AMD GPUs are famous for working very well on Linux. However, what about the very first GCN GPUs? Are they working as well as the new ones? In this post, I’m going to summarize how well these old GPUs are supported and what I’ve been doing to improve them.

This story is about the first two generations of GCN: Southern Islands (aka. SI, GCN1, GFX6) and Sea Islands (aka. CIK, GCN2, GFX7).

While AMD GPUs generally have a good reputation on Linux, these old GCN graphics cards have been a sore spot for as long as I’ve been working on the driver stack.

It occurred to me that resolving some of the long-standing issues on these old GPUs might be a great way to get me started on working on the amdgpu kernel driver and would help improve the default user experience of Linux users on these GPUs. I figured that it would give me a good base understanding, and later I could also start contributing code and bug fixes to newer GPUs.

The RADV team has supported RADV on SI and CIK GPUs for a long time. RADV support was already there even before I joined the team in mid-2019. Daniel added ACO support for GFX7 in November 2019, and Samuel added ACO support for GFX6 in January 2020. More recently, Martin added a Tahiti (GFX6) and Hawaii (GFX7) GPU to the Mesa CI which are running post-merge “nightly” jobs. So we can catch regressions and test our work on these GPUs quite quickly.

The kernel driver situation was less fortunate.

On the kernel side, amdgpu (the newer kernel driver) has supported CIK since June 2015 and SI since August 2016. DC (the new display driver) has supported CIK since September 2017 (the beginning), and SI support was added in July 2020 by Mauro. However, the old radeon driver was the default driver. Unfortunately, radeon doesn’t support Vulkan, so in the default user experience, users couldn’t play most games or benefit from any of the Linux gaming related work we’ve been doing for the last 10 years.

In order to get working Vulkan support on SI and CIK, we needed to use the following kernel params:

radeon.si_support=0 radeon.cik_support=0 amdgpu.si_support=1 amdgpu.cik_support=1

Then, you could boot with amdgpu and enjoy a semblance of a good user experience until the GPU crashed / hung, or until you tried to use some functionality which was missing from amdgpu, or until you plugged in a display which the display driver couldn’t handle.

It was… not the best user experience.
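As a small illustration of the four parameters above, here is a minimal Python sketch that checks whether a kernel command line hands SI or CIK over to amdgpu. The helper names `parse_module_params` and `amdgpu_requested` are made up for this example. It only inspects the command line string and does not tell you which driver is actually bound to the GPU.

```python
def parse_module_params(cmdline):
    """Collect 'module.param=value' tokens from a kernel command line."""
    params = {}
    for token in cmdline.split():
        key, sep, value = token.partition("=")
        # Module parameters look like 'module.param=value'.
        if sep and "." in key:
            params[key] = value
    return params


def amdgpu_requested(cmdline, family):
    """True if the command line disables radeon and enables amdgpu for
    the given family ('si' or 'cik')."""
    params = parse_module_params(cmdline)
    return (params.get(f"radeon.{family}_support") == "0"
            and params.get(f"amdgpu.{family}_support") == "1")


example = ("quiet radeon.si_support=0 radeon.cik_support=0 "
           "amdgpu.si_support=1 amdgpu.cik_support=1")
for family in ("si", "cik"):
    print(family, amdgpu_requested(example, family))
```

On a running Linux system, the same string can be read from `/proc/cmdline` instead of the hard-coded example.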

The first question that came to mind is, why wasn’t amdgpu the default kernel driver for these GPUs? Since the “experimental” support had been there for 10 years, we had thought the kernel devs would eventually just enable amdgpu by default, but that never happened. At XDC 2024 I met Alex Deucher, the lead developer of amdgpu and asked him what was missing. Alex explained to me that the main reason the default wasn’t switched is to avoid regressions for users who rely on some functionality not supported by amdgpu:

It doesn’t seem like much, does it? How hard can it be?

I messaged Alex Deucher to get some advice on where to start. Alex was very considerate and helped me to get a good understanding of how the code is organized, how the parts fit together and where I should start reading. Harry Wentland also helped a lot with making a plan how to fit analog connectors in DC. Then, I plugged my monitors into my Raphael iGPU to be used as a primary GPU, then plugged in an old Oland card as a secondary GPU, and started hacking.

For the display, I decided that the best way forward is to add what is missing from DC for these GPUs and use DC by default. That way, we can eventually get rid of the legacy display code (which was always meant as a temporary solution until DC landed).

Additionally, I decided to focus on dedicated GPUs because these are the most useful for gaming and are easy to test using a desktop computer. There is still work left to do for CIK APUs.

Analog connectors have been actually quite easy to deal with, once I understood the structure of the DC (display core) codebase. I could use the legacy display code as a reference. The DAC (digital to analog converter) is actually programmed by the VBIOS, and the driver just needs to call the VBIOS to tell it what to do. Easier said than done, but not too hard.

It also turned out that some chips that already defaulted to DC (eg. Tonga, Hawaii) also have analog connectors, which apparently just didn’t work on Linux by default. I managed to submit the first version of this in July. Then I was sidetracked with a lot of other issues, so I submitted the second version of the series in September, which then got merged.

It is incredibly difficult to debug issues when you don’t have the hardware to reproduce them yourself. Some developers have a good talent for writing patches to fix issues without actually seeing the issue, but I feel I still have a long way to go to be that good. It was pretty clear from the beginning that the only way to make sure my work actually works on all SI/CIK GPUs is to test all of them myself.

So, I went ahead and acquired at least one of each SI and CIK chip. I got most of them from used hardware ad sites, and Leonardo Frassetto sent me a few as well.

After I got the analog connector working using the old GPUs as a secondary GPU, I thought it’s time to test how well it works as a primary GPU. You know, the way most actual users would use them.

So I disabled the iGPU and booted my computer with each dGPU with amdgpu.dc=1 to see what happens.

This is where things started going crazy…

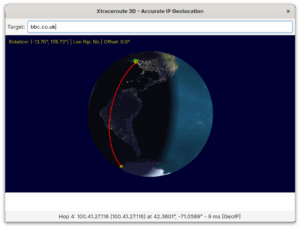

The way to debug these problems is the following:

amdgpu.dc=0, dump all DCE registers using umr:

umr -r oland.dce600..* > oland_nodc_good.txt

amdgpu.dc=1, dump all DCE registers using umr:

umr -r oland.dce600..* > oland_dc_bad.txt

I decided to fix the bugs before adding new features. I sent a few patch series to address a bunch of display issues mainly with SI (DCE6):
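Going back to the two register dumps: once both files exist, the registers that differ between the good and the bad boot are the interesting ones. Below is a minimal Python sketch of such a comparison. It assumes that each line of a dump has the shape `name => value`, which is an assumption about the dump format rather than something stated in this post. The register names in the example are hypothetical placeholders.

```python
def parse_dump(text):
    """Map register name -> value string for lines shaped like 'name => value'.

    Lines without '=>' are ignored. The line format is an assumption.
    """
    regs = {}
    for line in text.splitlines():
        name, sep, value = line.partition("=>")
        if sep:
            regs[name.strip()] = value.strip().lower()
    return regs


def diff_dumps(good, bad):
    """Return {name: (good_value, bad_value)} for registers that differ.

    A register missing from one dump shows up with None on that side.
    """
    names = sorted(set(good) | set(bad))
    return {n: (good.get(n), bad.get(n))
            for n in names if good.get(n) != bad.get(n)}


def compare_files(good_path, bad_path):
    """Read two dump files and print every register that differs."""
    with open(good_path) as f:
        good = parse_dump(f.read())
    with open(bad_path) as f:
        bad = parse_dump(f.read())
    for name, (g, b) in diff_dumps(good, bad).items():
        print(f"{name}: good={g} bad={b}")


# Tiny in-memory example with hypothetical register names.
good = parse_dump("asic.block.REG_A => 0x00000001\nasic.block.REG_B => 0x00000002")
bad = parse_dump("asic.block.REG_A => 0x00000001\nasic.block.REG_B => 0x00000003")
print(diff_dumps(good, bad))
```

With the files from the commands above, this would be called as `compare_files("oland_nodc_good.txt", "oland_dc_bad.txt")`.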

I noticed that HDMI audio worked alright on all GPUs with DC (as expected), however DP audio didn’t (which was unexpected). However it worked when both DP and HDMI were plugged in… After consulting with Alex and doing some trial and error, it turned out that this was just due to setting some clock frequency in the wrong way: Fix DP audio DTO1 clock source on DCE6.

In order to figure out the correct frequencies, I wrote a script that set the frequency using umr then played a sound. I just laid back and let the script run until I heard the sound. Then it was just a matter of figuring out why that frequency was correct.

A small fun fact: it turns out that DP audio on Tahiti didn’t work on any Linux driver before. Now it works with DC.

The DCE (Display Controller Engine), just like other parts of the GPU, has its own power requirements and needs certain clocks, voltages, etc. It is the responsibility of the power management code to make sure DCE gets the power it needs. Unfortunately, DC didn’t talk to the legacy power management code. Even more unfortunately, the power management code was buggy so that’s what I started with.

After I was mostly done with SI, I also fixed an issue with CIK, where the shutdown temperature was incorrectly reported.

Video encoding is usually an afterthought, not something that most users think about unless they are interested in streaming or video transcoding. It was definitely an afterthought for the hardware designers of SI, which has the first generation VCE (video coding engine) that only supports H264 and only up to 2048 x 1152. However, the old radeon kernel driver supports this engine and for anyone relying on this functionality, it would be a regression when switching to amdgpu. So we need to support it. There was already some work by Alexandre Demers to support VCE1, but that work was stalled due to issues caused by the firmware validation mechanism.

In order to switch SI to amdgpu by default, I needed to deal with VCE1. So I started a conversation with Christian König (amdgpu expert) to identify what the problem actually was, and with Alexandre to see how far along his work was.

Christian helped me a lot with understanding how the memory controller and the page table work.

After I got over the headache, I came up with this idea:

With that out of the way, the rest of the work on VCE1 was pretty straightforward. I could use Alexandre’s research, as well as the VCE2 code from amdgpu and the VCE1 code from radeon as a reference. Finally, a few reviews and three revisions later, the VCE1 series was accepted.

In the current economic situation of our world, I expect that people are going to use GPUs for much longer, and replace them less often. And when an old GPU is replaced, it doesn’t die, it goes to somebody who upgrades an even older GPU. Eventually it will reach somebody that can’t afford a better one. There are some efforts to use Linux to keep old computers alive, for example this one. My goal with this work is to make Linux gaming a good experience also for those who use old GPUs.

Other than that, I also did it for myself. Not because I want to run old GPUs myself, but because it has been a great learning experience to get into the amdgpu kernel driver.

The open source community including AMD themselves as well as other entities like Valve, Igalia, Red Hat etc. have invested a lot of time and effort into amdgpu and DC, which now support many generations of AMD GPUs: GCN1-5, RDNA1-4, as well as CDNA. In fact amdgpu supports more generations of GPUs now than what came before, and it looks like it will support many generations of future GPUs.

By making amdgpu work well with SI and CIK, we ensure that these GPUs remain competently supported for the foreseeable future.

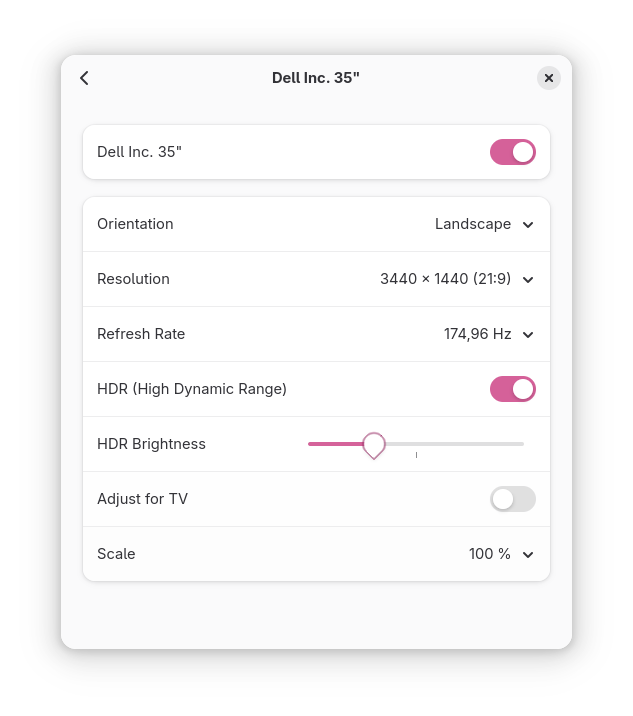

By switching SI and CIK to use DC by default, we enable display features like atomic modesetting, VRR, HDR, etc. and this also allows the amdgpu maintainers to eventually move on from the legacy display code without losing functionality.

Now that amdgpu is at feature parity with radeon on old GPUs, we switched the default to amdgpu on SI and CIK dedicated GPUs. It’s time to start thinking about what else is left to do.

dmesg log will mention if the APU uses TRAVIS or NUTMEG.

Kernel development is not as scary as it looks. It is a different technical challenge than what I was used to, but not in a bad way. Just needed to figure out a good workflow for how to configure a code editor, as well as what is a good way to test my work without rebuilding everything all the time.

AMD engineers have been very friendly and helpful to me all the way. Although there are a lot of memes and articles on the internet about attitude and rude/toxic messages by some kernel developers, I didn’t see that in amdgpu at least.

My approach was that even before I wrote a single line of code, I started talking to the maintainers (who would eventually review my patches) to find out what would be the good solution to them and how to get my work accepted. Communicating with the maintainers saved a lot of time and made the work faster, more pleasant and more collaborative.

Sadly, there is a huge latency between a Linux kernel developer working on something and the work reaching end users. Even if the patches are accepted quickly, it can take 3~6 months until users can actually use it.

In hindsight, if I had focused on finishing the analog support and VCE1 first (instead of fixing all the bugs I found), my work would have ended up in Linux 6.18 (including the bug fixes, as there is no deadline for those). Due to how I prioritized bug fixing, the features I’ve developed are only included in Linux 6.19, so this will be the version where SI and CIK dedicated GPUs default to amdgpu.

I presented a lightning talk on this topic at XDC 2025, where I talked about the state of SI and CIK support as of September 2025. You can find the slide deck here and the video here.

I’d like to say a big thank you to all of these people. All of the work I mentioned in this post would not have been possible without them.

When in doubt, consult Wikipedia.

GFX6 aka. GCN1 - Southern Islands (SI) dedicated GPUs: amdgpu is now the default kernel driver as of Linux 6.19. The DC display driver is now the default and is now usable for these GPUs. DC now supports analog connectors, power management is less buggy, and video encoding is now supported by amdgpu.

GFX7 aka. GCN2 - Sea Islands (CIK) dedicated GPUs: amdgpu is now the default kernel driver as of Linux 6.19. The DC display driver is now the default for Bonaire (was already the case for Hawaii). DC now supports analog connectors. Minor bug fixes.

GFX8 aka. GCN3 - Volcanic Islands (VI) dedicated GPUs:

DC now supports analog connectors.

(Note that amdgpu and DC were already supported on these GPUs since release.)

On Dec 17 we released systemd v259 into the wild.

In the weeks leading up to that release (and since then) I have posted a series of serieses of posts to Mastodon about key new features in this release, under the #systemd259 hash tag. In case you aren't using Mastodon, but would like to read up, here's a list of all 25 posts:

I intend to do a similar series of serieses of posts for the next systemd release (v260), hence if you haven't left tech Twitter for Mastodon yet, now is the opportunity.

My series for v260 will begin in a few weeks most likely, under the #systemd260 hash tag.

In case you are interested, here is the corresponding blog story for systemd v258, here for v257, and here for v256.

After introducing how graphics drivers work in general, I’d like to give a brief overview about what is what in the Linux graphics stack, what are the important parts and what the key projects are where the development happens, as well as what you need to do to get the best user experience out of it.

Please refer to my previous post for a more detailed explanation of graphics drivers in general. This post focuses on how things work in the open source graphics stack on Linux.

We have open source drivers for the GPUs from all major manufacturers with varying degrees of success.

The components you need in order to get your GPU working on open source drivers on a Linux distro are the following:

To make your GPU work, you need new enough versions of the Linux kernel, linux-firmware and Mesa (and LLVM) that include support for your GPU.

To make your GPU work well, I highly recommend using the latest stable versions of all of the above. If you use old versions, you are missing out. By using old versions you are choosing not to benefit from the latest developments (features and bug fixes) that open source developers have worked on, and you will have a sub-par experience (especially on relatively new hardware).

If you read Reddit posts, you will stumble upon some people who believe that “the drivers are in the kernel” on Linux. This is a half-truth. Only the KMDs are part of the kernel; everything else (linux-firmware, Mesa, LLVM) is distributed in separate packages. How exactly those packages are organized depends on your distribution.

Mesa is a collection of userspace drivers which implement various different APIs. It is the cornerstone of the open source graphics stack. I’m going to attempt to give a brief overview of what are the most relevant parts of Mesa.

An important part of Mesa is the Gallium driver infrastructure, which contains a lot of common code for implementing different APIs, such as:

Mesa also contains a collection of Vulkan drivers. Originally, Vulkan was deemed “lower level than Gallium”, so Vulkan drivers are not part of the Gallium driver infrastructure. However, Vulkan has a lot of overlapping functionality with the aforementioned APIs, so Vulkan drivers still share a lot of code with their Gallium counterparts when appropriate.

Another important part of Mesa is the NIR shader compiler stack, which is at the heart of every Mesa driver that is still being maintained. This enables sharing a lot of compiler code across different drivers. I highly recommend Faith Ekstrand’s post In defense of NIR to learn more about it.

Technically they are not drivers, but in practice, if you want to run Windows games, you will need a compatibility layer like Wine or Proton, including graphics translation layers. The recommended ones are:

Those are default in Proton and offer the best performance. However, for “political” reasons, these are sadly not the defaults in Wine, so either you’ll have to use Proton or make sure to install the above in Wine manually.

Just for the sake of completeness, I’ll also mention the Wine defaults:

Despite the X server being abandoned for a long time, there is still a debate between Linux users whether to use a Wayland compositor or the X server. I’m not going to discuss the advantages and disadvantages of these, because I don’t participate in their development and I feel it has already been well-explained by the community.

I’m just going to say that it helps to choose a competent compositor that implements direct scanout. This means that the frames as rendered by your game can be sent directly to the display without the need for the compositor to do any additional processing on it.

In this blog post I focus on just the driver stack, because that is largely shared between both solutions.

Sadly, many Linux distributions choose to ship old versions of the kernel and/or other parts of the driver stack, giving their users a sub-par experience. Debian, Ubuntu LTS and their derivatives like Mint, Pop OS, etc. are all guilty of this. They justify this by claiming that older versions are more reliable, but this is actually not true.

In reality, us driver developers as well as the developers of the API translation layers work hard to implement new features that are needed to get new games working, as well as fixing bugs that are exposed by new games (or updates of old games).

Regressions are real, but they are usually quickly fixed thanks to the extensive testing that we do: every time we merge new code, our automated testing system runs the full Vulkan conformance test suite to make sure that all functionality is still intact, thanks to Martin’s genius.

Hi all!

This month the new KMS plane color pipeline API has finally been merged! It took multiple years and continued work and review by engineers from multiple organizations, but at last we managed to push it over the finish line. This new API exposes new hardware blocks to user-space: these apply color transformations before blending multiple KMS planes into a final composited image to be sent on the wire. This API unlocks power-efficient and low-latency color management features such as HDR.

Still, much remains to be done. Color pipelines are now exposed on AMD and VKMS, Intel and other vendors are still working on their driver implementation. Melissa Wen has written a drm_info patch to show pipeline information, some more work is needed to plumb it through drmdb. Some patches have been floated to leverage color pipelines for post-blending transforms too (currently KMS only supports a fixed rudimentary post-blending pipeline with two LUTs and one 3×3 matrix).
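To make the fixed post-blending pipeline more concrete, here is a minimal Python sketch of what "two LUTs and one 3×3 matrix" means for a single pixel: a first per-channel LUT, then the matrix, then a second per-channel LUT. The function names are invented for this illustration, and the LUT sampling (linear interpolation over a normalized [0, 1] input) is an assumption; real hardware uses its own LUT sizes and fixed-point formats.

```python
def apply_lut(lut, x):
    """Sample a 1D LUT at x in [0, 1] using linear interpolation."""
    x = min(max(x, 0.0), 1.0)
    pos = x * (len(lut) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(lut) - 1)
    frac = pos - lo
    return lut[lo] * (1.0 - frac) + lut[hi] * frac


def apply_matrix(m, rgb):
    """Multiply a 3x3 matrix (list of rows) with an RGB triple."""
    return tuple(sum(m[row][col] * rgb[col] for col in range(3))
                 for row in range(3))


def post_blend(rgb, first_lut, matrix, second_lut):
    """First LUT per channel, then the 3x3 matrix, then the second LUT."""
    linear = tuple(apply_lut(first_lut, c) for c in rgb)
    mixed = apply_matrix(matrix, linear)
    return tuple(apply_lut(second_lut, c) for c in mixed)


# Example: decode with a 2.2 power curve, keep colors as-is, re-encode.
size = 256
decode = [(i / (size - 1)) ** 2.2 for i in range(size)]
encode = [(i / (size - 1)) ** (1 / 2.2) for i in range(size)]
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(post_blend((0.25, 0.5, 0.75), decode, identity, encode))
```

With an identity matrix the output stays close to the input; the small differences come from sampling the two curves with a finite number of LUT entries.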

On the wlroots side, Félix Poisot has redesigned the way post-blending color transforms are applied by the renderer. The API used to be a mix of descriptive (describing which primaries and transfer functions the output buffer uses) and prescriptive (passing a list of operations to apply). Now it’s fully prescriptive, which will help for offloading these transformations to the DRM backend.

GnSight has contributed support for the wlr-foreign-toplevel-management-v1 protocol to the Cage kiosk compositor. This enables better control over windows running inside the compositor: external tools can close or bring windows to the front.

mhorky has added client support for one-way method calls to go-varlink, as well as a nice Registry enhancement to add support for the org.varlink.service interface for free, for discovery and introspection of Varlink services. Now that the module is feature-complete I’ve released version 0.1.0.

delthas has introduced support for authenticating with the soju IRC bouncer via TLS client certificates. He has contributed a simple audio recorder to the Goguma mobile IRC client, plus new buttons above the reaction list to be able to easily +1 another user’s reaction. Hubert Hirtz has sent a collection of bug fixes and has added a button to reveal the password field contents on the connection screen.

I’ve resurrected work on some old projects I’d almost forgotten about. I’ve pushed a few patches for libicc, adding support for encoding multi-process transforms, luminance and metadata. I’ve added a basic test suite to libjsonschema, and improved handling of objects and arrays without enough information to automatically generate types from.

But the old project I’ve spent most of my time on is go-mls, a Go implementation of the Messaging Layer Security (MLS) protocol. MLS is an end-to-end encryption protocol for chat messages. My goal is twofold: learn how MLS works under the hood (implementing something is one of the best ways for me to understand that something), and lay the groundwork for a future end-to-end encryption IRC extension. This month I’ve fixed up the remaining failures in the test suite and I’ve implemented just enough to be able to create a group, add members to it, and exchange an encrypted message. I’ll work on remaining group operations (e.g. removing a member) next.

Last, I’ve migrated FreeDesktop’s Mailman 3 installation to PostgreSQL from SQLite. Mailman 3’s SQLite integration had pretty severe performance issues; these are gone with PostgreSQL. The migration wasn’t straightforward: there is no tooling to migrate Mailman 3 core’s data between database engines, so I had to manually fill the new database with the old data. I’ve migrated two more mailing lists to Mailman 3: fhs and nouveau. I plan to continue the migration in the coming months, and hopefully we’ll be able to decommission Mailman 2 in a not-so-distant future.

See you next year!

I’d like to give an overview on how graphics drivers work in general, and then write a little bit about the Linux graphics stack for AMD GPUs. The intention of this post is to clear up a bunch of misunderstandings that people have on the internet about open source graphics drivers.

A graphics driver is a piece of software code that is written for the purpose of allowing programs on your computer to access the features of your GPU. Every GPU is different and may have different capabilities or different ways of achieving things, so they need different drivers, or at least different code paths in a driver that may handle multiple GPUs from the same vendor and/or the same hardware generation.

The main motivation for graphics drivers is to allow applications to utilize your hardware efficiently. This enables games to render pretty pixels, scientific apps to calculate stuff, as well as video apps to encode / decode efficiently.

Compared to drivers for other hardware, graphics is very complicated because the functionality is very broad and the differences between each piece of hardware can be also vast.

Here is a simplified explanation on how a graphics driver stack usually works. Note that most of the time, these components (or some variation) are bundled together to make them easier to use.

I’ll give a brief overview of each component below.

Most GPUs have additional processors (other than the shader cores) which run a firmware that is responsible for operating the low-level details of the hardware, usually stuff that is too low-level even for the kernel.

The firmware on those processors is responsible for: power management, context switching, command processing, display, video encoding/decoding etc. Among other things it parses the commands we submitted to it, launches shaders, distributes work between the shader cores etc.

Some GPU manufacturers are moving more and more functionality to firmware, which means that the GPU can operate more autonomously and less intervention is needed by the CPU. This tendency is generally positive for reducing CPU time spent on programming the GPU (as well as “CPU bubbles”), but at the same time it also means that the way the GPU actually works becomes less transparent.

You might ask, why not implement all driver functionality in the kernel? Wouldn’t it be simpler to “just” have everything in the kernel? The answer is no, mainly because there is a LOT going on which nobody wants in the kernel.

So, usually, the KMD is only left with some low-level tasks that every user needs:

Applications interact with userspace drivers instead of the kernel (or the hardware directly). Userspace drivers are compiled as shared libraries and are responsible for implementing one or more specific APIs for graphics, compute or video for a specific family of GPUs. (For example, Vulkan, OpenGL or OpenCL, etc.) Each graphics API has entry points which load the available driver(s) for the GPU(s) in the user’s system. The Vulkan loader is an example of this; other APIs have similar components for this purpose.
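As an illustration of how a loader can discover userspace drivers, here is a minimal Python sketch that reads Vulkan driver manifests. It assumes the common Linux layout where each driver installs a small JSON manifest with an `ICD` object containing a `library_path`, in directories such as `/usr/share/vulkan/icd.d`. The real Vulkan loader looks in more places and honours environment variables, so treat this as a simplification. The library name in the example is a hypothetical placeholder.

```python
import glob
import json
import os

# Two commonly used manifest directories; the real loader checks more.
DEFAULT_DIRS = ("/etc/vulkan/icd.d", "/usr/share/vulkan/icd.d")


def parse_icd_manifest(text):
    """Extract the driver library path and API version from a manifest."""
    icd = json.loads(text)["ICD"]
    return {"library_path": icd["library_path"],
            "api_version": icd.get("api_version")}


def find_icds(dirs=DEFAULT_DIRS):
    """Return {manifest path: parsed info} for all manifests found."""
    found = {}
    for directory in dirs:
        for path in sorted(glob.glob(os.path.join(directory, "*.json"))):
            with open(path) as f:
                found[path] = parse_icd_manifest(f.read())
    return found


# Example manifest; the library name is a hypothetical placeholder.
sample = """
{
    "file_format_version": "1.0.0",
    "ICD": {
        "library_path": "/usr/lib/libvulkan_example.so",
        "api_version": "1.3.0"
    }
}
"""
print(parse_icd_manifest(sample))
```

Calling `find_icds()` on a Linux machine lists the shared libraries that a loader following this layout would consider.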

The main functionality of a userspace driver is to take the commands from the API (for example, draw calls or compute dispatches) and turn them into low level commands in a binary format that the GPU can understand. In Vulkan, this is analogous to recording a command buffer. Additionally, they utilize a shader compiler to turn a higher level shader language (eg. GLSL) or bytecode (eg. SPIR-V) into hardware instructions which the GPU’s shader cores can execute.

Furthermore, userspace drivers also take part in memory management: they basically act as an interface between the memory model of the graphics API and the kernel’s memory manager.

The userspace driver calls the aforementioned kernel uAPI to submit the recorded commands to the kernel which then schedules it and hands it to the firmware to be executed.
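To give a feel for what "turning API commands into a binary format" means, here is a toy Python sketch of recording a command buffer. The opcodes and the packet layout are entirely made up for this illustration; every real GPU defines its own packet format, and a real driver would hand the finished buffer to the kernel instead of just returning bytes.

```python
import struct

# Hypothetical opcodes, invented for this example only.
OP_DRAW = 1
OP_DISPATCH = 2


class CommandBuffer:
    """Records API-level commands as little-endian 32-bit words."""

    def __init__(self):
        self._data = bytearray()

    def draw(self, vertex_count, instance_count=1):
        # Packet: opcode, vertex count, instance count.
        self._data += struct.pack("<III", OP_DRAW, vertex_count, instance_count)

    def dispatch(self, x, y, z):
        # Packet: opcode, then the workgroup counts in three dimensions.
        self._data += struct.pack("<IIII", OP_DISPATCH, x, y, z)

    def finish(self):
        """Return the recorded commands as an immutable byte string."""
        return bytes(self._data)


cmd = CommandBuffer()
cmd.draw(3)
cmd.dispatch(8, 8, 1)
print(cmd.finish().hex())
```

The point of the sketch is only the shape of the work: the application makes API calls, and the driver appends fixed-format packets to a buffer that is submitted later in one go.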

If you’ve seen a loading screen in your favourite game which told you it was “compiling shaders…” you probably wondered what that’s about and why it’s necessary.

Unlike CPUs which have converged to a few common instruction set architectures (ISA), GPUs are a mess and don’t share the same ISA, not even between different GPU models from the same manufacturer. Although most modern GPUs have converged to SIMD based architectures, the ISA is still very different between manufacturers and it still changes from generation to generation (sometimes different chips of the same generation have slightly different ISA). GPU makers keep adding new instructions when they identify new ways to implement some features more effectively.

To deal with all that mess, graphics drivers have to do online compilation of shaders (as opposed to offline compilation which usually happens for apps running on your CPU).

This means that shaders have to be recompiled when the userspace graphics driver is updated either because new functionality is available or because bug fixes were added to the driver and/or compiler.
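One way to picture why a driver update triggers recompilation is a shader cache whose key includes the driver build. The following Python sketch is a simplified illustration, not how any particular driver implements its cache: the names `cache_key` and `ShaderCache`, and the idea of a `driver_build_id` string, are assumptions made for this example.

```python
import hashlib


def cache_key(bytecode, gpu_name, driver_build_id):
    """Hash everything that can change the compiled result."""
    h = hashlib.sha256()
    for part in (gpu_name.encode(), driver_build_id.encode(), bytecode):
        # Length prefix keeps different field splits from colliding.
        h.update(len(part).to_bytes(8, "little"))
        h.update(part)
    return h.hexdigest()


class ShaderCache:
    """In-memory cache that compiles only on a key miss."""

    def __init__(self, compile_fn):
        self._compile = compile_fn
        self._store = {}
        self.compile_count = 0

    def get(self, bytecode, gpu_name, driver_build_id):
        key = cache_key(bytecode, gpu_name, driver_build_id)
        if key not in self._store:
            self._store[key] = self._compile(bytecode, gpu_name)
            self.compile_count += 1
        return self._store[key]


# Stand-in "compiler" that just tags the input; a real one emits GPU ISA.
cache = ShaderCache(lambda code, gpu: b"ISA:" + gpu.encode() + b":" + code)
cache.get(b"shader", "example-gpu", "build-1")
cache.get(b"shader", "example-gpu", "build-1")  # same key: cache hit
cache.get(b"shader", "example-gpu", "build-2")  # driver updated: recompiled
print(cache.compile_count)
```

Because the driver build is part of the key, every cached entry from the old driver simply stops matching after an update, which is what shows up to players as another "compiling shaders…" screen.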

On some systems (especially proprietary operating systems like Windows), GPU manufacturers intend to make users’ lives easier by offering all of the above in a single installer package, which is just called “the driver”.

Typically such a package includes:

On some systems (typically on open source systems like Linux distributions), usually you can already find a set of packages to handle most common hardware, so you can use most functionality out of the box without needing to install anything manually.

Neat, isn’t it?

However, on open source systems, the graphics stack is more transparent, which means that there are many parts that are scattered across different projects, and in some cases there is more than one driver available for the same HW. To end users, it can be very confusing.

However, this doesn’t mean that open source drivers are designed worse. It is just that due to their community oriented nature, they are organized differently.

One of the main sources of confusion is that various Linux distributions mix and match different versions of the kernel with different versions of different UMDs which means that users of different distros can get a wildly different user experience based on the choices made for them by the developers of the distro.

Another source of confusion is that we driver developers are really, really bad at naming things, so sometimes different projects end up having the same name, or some projects have nonsensical or outdated names.

In the next post, I’ll continue this story and discuss how the above applies to the open source Linux graphics stack.

Hey, I have been under distress lately due to personal circumstances that are outside my control. I cannot find a permanent job that allows me to function, I am not eligible for government benefits, my grant proposals to work on free and open-source projects got rejected, paid internships are quite difficult to find, especially when many of them prioritize new contributors. Essentially, I have no stable, monthly income that allows me to sustain myself.

Nowadays, I mostly volunteer to improve accessibility throughout GNOME apps, either by enhancing the user experience for people with disabilities, or enabling them to use them. I helped make most of GNOME Calendar accessible with a keyboard and screen reader, with additional ongoing effort involving merge requests !564 and !598 to make the month view accessible, all of which is an effort no company has ever contributed to, or would ever contribute to financially. These merge requests require literal thousands of hours for research, development, and testing, enough to sustain me for several years if I were employed.

I would really appreciate any kinds of donations, especially ones that happen periodically to increase my monthly income. These donations will allow me to sustain myself while allowing me to work on accessibility throughout GNOME, essentially ‘crowdfunding’ development without doing it on the behalf of the GNOME Foundation or another organization.

Together with my then-colleague Kalev Lember, I recently added support for pre-installing Flatpak applications. It sounds fancy, but it is conceptually very simple: Flatpak reads configuration files from several directories to determine which applications should be pre-installed. It then installs any missing applications and removes any that are no longer supposed to be pre-installed (with some small caveats).

For example, the following configuration tells Flatpak that the devel branch of the app org.test.Foo from remotes which serve the collection org.test.Collection, and the app org.test.Bar from any remote should be installed:

[Flatpak Preinstall org.test.Foo]
CollectionID=org.test.Collection
Branch=devel

[Flatpak Preinstall org.test.Bar]

By dropping in another configuration file with a higher priority, pre-installation of the app org.test.Foo can be disabled:

[Flatpak Preinstall org.test.Foo]
Install=false
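The override behaviour of the two configuration snippets can be sketched in a few lines of Python. This is not Flatpak's implementation: it assumes that files are merged key by key from lowest to highest priority, and that an app is pre-installed unless `Install=false` is set. The helper names are invented for this illustration.

```python
import configparser

PREFIX = "Flatpak Preinstall "


def load_preinstall(config_texts):
    """Merge config texts, given from lowest to highest priority."""
    apps = {}
    for text in config_texts:
        parser = configparser.ConfigParser(interpolation=None)
        parser.optionxform = str  # keep key case (CollectionID, Branch)
        parser.read_string(text)
        for section in parser.sections():
            if section.startswith(PREFIX):
                app_id = section[len(PREFIX):]
                # Later (higher priority) files override earlier keys.
                apps.setdefault(app_id, {}).update(parser[section])
    return apps


def wanted_apps(apps):
    """Apps that should be pre-installed (assumed default: install)."""
    return {app for app, opts in apps.items()
            if opts.get("Install", "true").lower() != "false"}


def plan(wanted, preinstalled):
    """Return (apps to install, apps to remove)."""
    return wanted - preinstalled, preinstalled - wanted


base = """
[Flatpak Preinstall org.test.Foo]
CollectionID=org.test.Collection
Branch=devel

[Flatpak Preinstall org.test.Bar]
"""
override = """
[Flatpak Preinstall org.test.Foo]
Install=false
"""
apps = load_preinstall([base, override])
print(plan(wanted_apps(apps), preinstalled={"org.test.Foo"}))
```

In this example org.test.Bar ends up in the install set and org.test.Foo in the removal set, which mirrors the "install what is missing, remove what is no longer wanted" behaviour described earlier, minus the caveats.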

The installation procedure is the same as it is for the flatpak-install command. It supports installing from remotes and from side-load repositories, which is to say from a repository on a filesystem.

This simplicity also means that system integrators are responsible for assembling all the parts into a functioning system, and that there are a number of choices that need to be made for installation and upgrades.

The simplest way to approach this is to just ship a bunch of config files in /usr/share/flatpak/preinstall.d and config files for the remotes from which the apps are available. In the installation procedure, flatpak-preinstall is called and it will download the Flatpaks from the remotes over the network into /var/lib/flatpak. This works just fine, until someone needs one of those apps but doesn’t have a suitable network connection.

The next way one could approach this is exactly the same way, but with a sideload repository on the installation medium which contains the apps that will get pre-installed. The flatpak-preinstall command needs to be pointed at this repository at install time, and the process which creates the installation medium needs to be adjusted to create this repository. The installation process now works without a network connection. System updates are usually downloaded over the network, just as new pre-installed applications will be.

It is also possible to simply skip flatpak-preinstall, and use flatpak-install to create a Flatpak installation containing the pre-installed apps which get shipped on the installation medium. This installation can then be copied over from the installation medium to /var/lib/flatpak in the installation process. It unfortunately also makes the installation process less flexible because it becomes impossible to dynamically build the configuration.

On modern, image-based operating systems, it might be tempting to just ship this Flatpak installation on the image because the flexibility is usually neither required nor wanted. This currently does not work for the simple reason that the default system installation is in /var/lib/flatpak, which is not in /usr which is the mount point of the image. If the default system installation was in the image, then it would be read-only because the image is read-only. This means we could not update or install anything new to the system installation. If we make it possible to have two different system installations — one in the image, and one in /var — then we could update and install new things, but the installation on the image would become useless over time because all the runtimes and apps will be in /var anyway as they get updated.

All of those issues mean that even for image-based operating systems, pre-installation via a sideload repository is not a bad idea for now. It is however also not perfect. The kind of “pure” installation medium which is simply an image now contains a sideload repository. It also means that a factory reset functionality is not possible because the image does not contain the pre-installed apps.

In the future, we will need to revisit these approaches to find a solution that works seamlessly with image-based operating systems and supports factory reset functionality. Until then, we can use the systems mentioned above to start rolling out pre-installed Flatpaks.

F43 picked up the two patches I created to fix a bunch of deadlocks on laptops reported in my previous blog posting. Turns out Vulkan layers have a subtle thing I missed, and I removed a line from the device select layer that would only matter if you have another layer, which happens under Steam.

The Fedora update process caught this, but the update still got published, which was a mistake. Changes like this probably need higher karma thresholds.

I've released a new update https://bodhi.fedoraproject.org/updates/FEDORA-2025-2f4ba7cd17 that hopefully fixes this. I'll keep an eye on the karma.

XDC 2025 happened at the end of September, beginning of October this year, in Kuppelsaal, the historic TU Wien building in Vienna. XDC, The X.Org Developer’s Conference, is truly the premier gathering for open-source graphics development. The atmosphere was, as always, highly collaborative and packed with experts across the entire stack.

I was thrilled to present, together with my workmate Ella Stanforth, on the progress we have made in enhancing the Raspberry Pi GPU driver stack. Representing the broader Igalia Graphics Team that works on this GPU, Ella and I detailed the strides we have made in the OpenGL driver, though some of the improvements also affect the Vulkan driver.

The presentation was divided into two parts. In the first one, we talked about the new features that we have implemented, or are still implementing, mainly to make the driver more closely aligned with OpenGL 3.2. Key features explained were 16-bit Normalized Format support, Robust Context support, and Seamless cubemap implementation.

Beyond these core OpenGL updates, we also highlighted other features, such as NIR printf support, framebuffer fetch or dual source blend, which is important for some game emulators.

The second part focused on specific work done to improve performance. Here, we started with different traces from the popular GFXBench application, and explained the main improvements done throughout the year, with a look at how much each of these changes improved the performance for each of the benchmarks (or on average).

In the end, for some benchmarks we nearly doubled the performance compared to last year. I won’t explain each of the changes here, but I encourage the reader to watch the talk, which is already available.

For those that prefer to check the slides instead of the full video, you can view them here:

Outside of the technical track, the venue’s location provided some excellent down time opportunities to have lunch at different nearby places. I need to highlight here one that I really enjoyed: An’s Kitchen Karlsplatz. This cozy Vietnamese street food spot quickly became one of my favourite places, and I went there a couple of times.

On the last day, I also had the opportunity to visit some of the most recommendable sightseeing spots in Vienna. Of course, one needs more than half a day for a proper visit, but at least it sparked enough interest to make a note to come back and pay a full visit to the city.

Meanwhile, I would like to thank all the conference organizers, as well as all the attendees, and I look forward to seeing them again.

Already on Sep 17 we released systemd v258 into the wild.

In the weeks leading up to that release I have posted a series of serieses of posts to Mastodon about key new features in this release, under the #systemd258 hash tag. It was my intention to post a link list here on this blog right after completing that series, but I simply forgot! Hence, in case you aren't using Mastodon, but would like to read up, here's a list of all 37 posts:

I intend to do a similar series of serieses of posts for the next systemd release (v259), hence if you haven't left tech Twitter for Mastodon yet, now is the opportunity.

We intend to shorten the release cycle a bit for the future, and in fact managed to tag v259-rc1 already yesterday, just 2 months after v258. Hence, my series for v259 will begin soon, under the #systemd259 hash tag.

In case you are interested, here is the corresponding blog story for systemd v257, and here for v256.

It has been a long time since I published any update in this space. Since this was a year of colossal changes for me, maybe it is also time for me to make something different with this blog and publish something just for a change — why not start talking about XDC 2025?

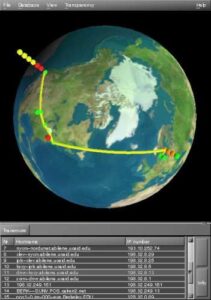

This year, I attended XDC 2025 in Vienna as an Igalia developer. I was thrilled to see some faces from people I worked with in the past and people I’m working with now. I had a chance to hang out with some folks I worked with at AMD (Harry, Alex, Leo, Christian, Shashank, and Pierre), many Igalians (Žan, Job, Ricardo, Paulo, Tvrtko, and many others), and finally some developers from Valve. In particular, I met Tímur in person for the first time, even though we have been talking for months about GPU recovery. Speaking of GPU recovery, we held a workshop on this topic together.

The workshop was packed with developers from different companies, which was nice because it added different angles on this topic. We began our discussion by focusing on the topic of job resubmission. Christian began sharing a brief history of how the AMDGPU driver started handling resubmission and the associated issues. After learning from erstwhile experience, amdgpu ended up adopting the following approach:

Below, you can see one crucial series associated with amdgpu recovery implementation:

The next topic was a discussion around the replacement of drm_sched_resubmit_jobs() since this function became deprecated. Just a few drivers still use this function, and they need a replacement for that. Some ideas were floating around to extract part of the specific implementation from some drivers into a generic function. The next day, Philipp Stanner continued to discuss this topic in his workshop, DRM GPU Scheduler.

Another crucial topic discussed was improving GPU reset debuggability to narrow down which operations cause the hang (keep in mind that GPU recovery is a medicine, not the cure to the problem). Intel developers shared their strategy for dealing with this by obtaining hints from userspace, which helped them provide a better set of information to append to the devcoredump. AMD could adopt this alongside dumping the IB data into the devcoredump (I am already investigating this).

Finally, we discussed strategies to avoid regressions that cause hangs. In summary, we have two lines of defense:

This year, as always, XDC was super cool, packed with many engaging presentations which I highly recommend everyone check out. If you are interested, check the schedule and the presentation recordings available on the X.Org Foundation Youtube page. Anyway, I hope this blog post marks the inauguration of a new era for this site, where I will start posting more content ranging from updates to tutorials. See you soon.

Hi!

This month a lot of new features have been added to the Goguma mobile IRC client. Hubert Hirtz has implemented drafts so that unsent text gets saved and network disconnections don’t disrupt users typing a message. He also enabled replying to one’s own messages, changed the appearance of short messages containing only emoji, upgraded our emoji library to Unicode version 16, fixed some linkifier bugs and added unit tests.

Markus Cisler has added a new option in the message menu to show a user’s profile. I’ve added an on-disk cache for images (with our own implementation, because the widely used cached_network_image package is heavyweight). I’ve been working on displaying network icons and blocking users, but that work is not finished yet. I’ve also contributed some maintenance fixes for our webcrypto.dart dependency (toolkit upgrades and CI fixes).

The soju IRC bouncer has also got some love this month. delthas has contributed support for labeled-response for soju clients, allowing more reliable matching of server replies with client commands. I’ve introduced a new icon directive to configure an image representing the bouncer. soju v0.10.0 has been released, followed by soju v0.10.1 including bug fixes from Karel Balej and Taavi Väänänen.
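As a rough illustration of why labels make this matching more reliable, the Python sketch below tags each outgoing command with a unique `label` message tag and routes a reply carrying the same tag back to the pending command. The class and method names are hypothetical, and only single-line replies are handled (the labeled-response specification also covers batched replies and ACK); this is not soju's code.

```python
import itertools

class LabelTracker:
    """Match server replies to client commands via the IRCv3 `label` tag."""

    def __init__(self):
        self._counter = itertools.count(1)
        self._pending = {}  # label -> original command

    def send(self, command):
        """Return the wire line for `command`, prefixed with a fresh label tag."""
        label = f"l{next(self._counter)}"
        self._pending[label] = command
        return f"@label={label} {command}"

    def receive(self, line):
        """Return the command this reply answers, or None if it is unlabeled."""
        if not line.startswith("@"):
            return None
        tags, _, _rest = line[1:].partition(" ")
        for tag in tags.split(";"):
            key, _, value = tag.partition("=")
            if key == "label":
                return self._pending.pop(value, None)
        return None
```

Without labels, a client has to guess which reply belongs to which command from ordering and numerics alone; with them, the lookup is a plain dictionary access.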

In Wayland news, wlroots v0.19.2 and v0.18.3 have been released thanks to Simon Zeni. I’ve added support for the color-representation protocol for the Vulkan renderer, allowing clients to configure the color encoding and range for YCbCr content. Félix Poisot has been hard at work with more color management patches: screen default color primaries are now extracted from the EDID and exposed to compositors, the cursor is now correctly converted to the output’s primaries and transfer function, and some work-in-progress patches switch the renderer API from a descriptive model to a prescriptive model.

go-webdav v0.7.0 has been released with a patch from prasad83 to play well with Thunderbird. I’ve updated clients to make multi-status errors non-fatal, returning partial data alongside the error.

I’ve released drm_info v2.9.0 with improvements mentioned in the previous status update plus support for the TILE connector property.

See you next month!

I had a bug appear in my email recently which led me down a rabbit hole, and I'm going to share it for future people wondering why we can't have nice things.

1. Get an intel/nvidia (newer than Turing) laptop.

2. Log in to GNOME on Fedora 42/43

3. Hotplug a HDMI port that is connected to the NVIDIA GPU.

4. Desktop stops working.

My initial reproduction got me a hung mutter process with a nice backtrace which pointed at the Vulkan Mesa device selection layer, trying to talk to the wayland compositor to ask it what the default device is. The problem was the process was the wayland compositor, and how was this ever supposed to work. The Vulkan device selection was called because zink called EnumeratePhysicalDevices, and zink was being loaded because we recently switched to it as the OpenGL driver for newer NVIDIA GPUs.

I looked into zink and the device select layer code, and lo and behold someone has hacked around this badly already, and probably wrongly and I've no idea what the code does, because I think there is at least one logic bug in it. Nice things can't be had because hacks were done instead of just solving the problem.

The hacks in place ensured that, under certain circumstances involving zink/xwayland, the device select code to probe the window system was disabled, due to deadlocks seen. I'd no idea if more hacks were going to help, so I decided to step back and try to work out something better.

The first question I had is why WAYLAND_DISPLAY is set inside the compositor process, it is, and if it wasn't I would never hit this. It's pretty likely on the initial compositor start this env var isn't set, so the problem only becomes apparent when the compositor gets a hotplugged GPU output, and goes to load the OpenGL driver, zink, which enumerates and hits device select with env var set and deadlocks.

I wasn't going to figure out a way around WAYLAND_DISPLAY being set at this point, so I leave the above question as an exercise for mutter devs.

At the point where zink is loading in mesa for this case, we have the file descriptor of the GPU device that we want to load a driver for. We don't actually need to enumerate all the physical devices, we could just find the ones for that fd. There is no API for this in Vulkan. I wrote an initial proof-of-concept instance extension called VK_MESA_enumerate_devices_fd. I wrote initial loader code to play with it, and wrote zink code to use it. Because this is a new instance API, device-select will also ignore it. However this ran into a big problem in the Vulkan loader. The loader is designed around some internals that PhysicalDevices will enumerate in similar ways, and it has to trampoline PhysicalDevice handles to underlying driver pointers so that if an app enumerates once, and enumerates again later, the PhysicalDevice handles remain consistent for the first user. There is a lot of code, and I've no idea how hotplug GPUs might fail in such situations. I couldn't find a decent path forward without knowing a lot more about the Vulkan loader. I believe this is the proper solution, as we know the fd, we should be able to get things without doing a full enumeration then picking the answer using the fd info. I've asked Vulkan WG to take a look at this, but I still need to fix the bug.
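For reference, the "full enumeration then picking the answer using the fd info" step can be sketched in Python. The sketch assumes each enumerated device reports its DRM node numbers the way VK_EXT_physical_device_drm does (primary and render major/minor); the `DrmProps` type and the function names are hypothetical, and this is not the zink code.

```python
import os
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

@dataclass
class DrmProps:
    """Subset of per-device DRM node info (modelled on VkPhysicalDeviceDrmPropertiesEXT)."""
    name: str
    primary: Optional[Tuple[int, int]]  # (major, minor), or None if absent
    render: Optional[Tuple[int, int]]

def node_of_fd(fd: int) -> Tuple[int, int]:
    """Return the (major, minor) of the device node behind an open fd."""
    rdev = os.fstat(fd).st_rdev
    return os.major(rdev), os.minor(rdev)

def pick_device(devices: Sequence[DrmProps], node: Tuple[int, int]) -> Optional[DrmProps]:
    """Pick the enumerated device whose primary or render node matches `node`."""
    for dev in devices:
        if node in (dev.primary, dev.render):
            return dev
    return None
```

A caller would use `pick_device(devices, node_of_fd(fd))`. The matching itself is cheap; the trouble described here is that building `devices` requires the full enumeration, which is exactly where the device select layer gets involved.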

Maybe I can just turn off device selection, like the current hacks do, but in a better manner. Enter VK_EXT_layer_settings. This extension allows layers to expose a layer setting in the instance creation. I can have the device select layer expose a setting which says don't touch this instance. Then in the zink code where we have a file descriptor being passed in and create an instance, we set the layer setting to avoid device selection. This seems to work, but it has some caveats I need to consider, though I think it should be fine.

zink uses a single VkInstance for its device screen. This is shared between all pipe_screens. Now I think this is fine inside a compositor, since we shouldn't ever be loading zink via the non-fd path, and I hope for most use cases it will work fine, better than the current hacks and better than some other ideas we threw around. The code for this is in [1].

If you have a vulkan compositor, it might be worth setting the layer setting if the mesa device select layer is loaded, esp if you set the DISPLAY/WAYLAND_DISPLAY and do any sort of hotplug later. You might be safe if you EnumeratePhysicalDevices early enough, the reason it's a big problem in mutter is it doesn't use Vulkan, it uses OpenGL and we only enumerate Vulkan physical devices at runtime through zink, never at startup.

AMD and NVIDIA I think have proprietary device selection layers, and these might also deadlock in similar ways. I think we've seen some weird deadlocks in NVIDIA driver enumerations as well that might be a similar problem.

[1] https://gitlab.freedesktop.org/mesa/mesa/-/merge_requests/38252

Yesterday I released Flatpak 1.17.0. It is the first version of the unstable 1.17 series and the first release in 6 months. There are a few things which didn’t make it for this release, which is why I’m planning to do another unstable release rather soon, and then a stable release still this year.

Back at LAS this year I talked about the Future of Flatpak and I started with the grim situation the project found itself in: Flatpak was stagnant, the maintainers left the project and PRs didn’t get reviewed.

Some good news: things are a bit better now. I have taken over maintenance, Alex Larsson and Owen Taylor managed to set aside enough time to make this happen and Boudhayan Bhattcharya (bbhtt) and Adrian Vovk also got more involved. The backlog has been reduced considerably and new PRs get reviewed in a reasonable time frame.

I also listed a number of improvements that we had planned, and we made progress on most of them:

Besides the changes directly in Flatpak, there are a lot of other things happening around the wider ecosystem:

What I have also talked about at my LAS talk is the idea of a Flatpak-Next project. People got excited about this, but I feel like I have to make something very clear:

If we redid Flatpak now, it would not be significantly better than the current Flatpak! You could still not do nested sandboxing, you would still need a D-Bus proxy, you would still have a complex permission system, and so on.

Those problems require work outside of Flatpak, but have to integrate with Flatpak and Flatpak-Next in the future. Some of the things we will be doing include:

So if you’re excited about Flatpak-Next, help us to improve the Flatpak ecosystem and make Flatpak-Next more feasible!

This was the first year I attended Kernel Recipes, and I have nothing to say but how much I enjoyed it and how grateful I am for the opportunity to talk more about kworkflow to very experienced kernel developers. What I like most about Kernel Recipes is its intimate format, with only one track and many moments to get closer to experts and people that you usually only talk to online during the whole year.

In the beginning of this year, I gave the talk Don’t let your motivation go, save time with kworkflow at FOSDEM, introducing kworkflow to a more diversified audience, with different levels of involvement in the Linux kernel development.

At this year’s Kernel Recipes I presented the second talk of the first day: Kworkflow - mix & match kernel recipes end-to-end.

The Kernel Recipes audience is a bit different from FOSDEM, with mostly long-term kernel developers, so I decided to just go directly to the point. I showed kworkflow being part of the daily life of a typical kernel developer from the local setup to install a custom kernel in different target machines to the point of sending and applying patches to/from the mailing list. In short, I showed how to mix and match kernel workflow recipes end-to-end.

As I was a bit fast when showing some features during my presentation, in this blog post I explain each slide from my speaker notes. You can see a summary of this presentation in the Kernel Recipe Live Blog Day 1: morning.

Hi, I’m Melissa Wen from Igalia. As we already started sharing kernel recipes and even more is coming in the next three days, in this presentation I’ll talk about kworkflow: a cookbook to mix & match kernel recipes end-to-end.

This is my first time attending Kernel Recipes, so lemme introduce myself briefly.

And what’s this cookbook called kworkflow?

Kworkflow is a tool created by Rodrigo Siqueira, my colleague at Igalia. It’s a single platform that combines software and tools to:

It’s mostly done by volunteers, kernel developers using their spare time. Its features cover real use cases according to kernel developer needs.

Basically it’s mixing and matching the daily life of a typical kernel developer with kernel workflow recipes with some secret sauces.

So, it’s time to start the first recipe: A good GPU driver for my AMD laptop.

Before starting any recipe we need to check the necessary ingredients and tools. So, let’s check what you have at home.

With kworkflow, you can use:

kw device: to get information about the target machine, such as CPU model, kernel version, distribution and GPU model.

kw remote: to set the address of this machine for remote access.

kw config: you can configure kw with kw config. With this command you can basically select the tools, flags and preferences that kw will use to build and deploy a custom kernel in a target machine. You can also define recipients of your patches when sending them using kw send-patch. I’ll explain more about each feature later in this presentation.

kw kernel-config-manager (or just kw k): to fetch the kernel .config file from a given machine, store multiple .config files, list and retrieve them according to your needs.

Now, with all ingredients and tools selected and well portioned, follow the right steps to prepare your custom kernel!

First step: Mix ingredients with kw build or just kw b

kw b and its options wrap many routines of compiling a custom kernel.

kw b -i to check the name and kernel version and the number of modules that will be compiled and kw b --menu to change kernel configurations.

kw b to compile the custom kernel for a target machine.

Second step: Bake it with kw deploy or just kw d

After compiling the custom kernel, we want to install it in the target machine.

Check the name of the custom kernel built: 6.17.0-rc6 and with kw s SSH access the target machine and see it’s running the kernel from the Debian distribution 6.16.7+deb14-amd64.

As with building settings, you can also pre-configure some deployment settings, such as compression type, path to device tree binaries, target machine (remote, local, vm), if you want to reboot the target machine just after deploying your custom kernel, and if you want to boot in the custom kernel when restarting the system after deployment.

If you didn’t pre-configure some options, you can still customize them as a command option, for example: kw d --reboot will reboot the system after deployment, even if I didn’t set this in my preferences.

By just running kw d --reboot I have installed the kernel in a given target machine and rebooted it. So when accessing the system again I can see it was booted in my custom kernel.

Third step: Time to taste with kw debug

kw debug wraps many tools for validating a kernel in a target machine. We can log basic dmesg info but also track events and use ftrace.

With kw debug --dmesg --history we can grab the full dmesg log from a remote machine; if you use the --follow option, you will monitor dmesg outputs. You can also run a command with kw debug --dmesg --cmd="<my command>" and just collect the dmesg output related to this specific execution period.

Use kw drm --gui-off to drop the graphical interface and release the amdgpu driver so it can be unloaded. So I run kw debug --dmesg --cmd="modprobe -r amdgpu" to unload the amdgpu driver, but it fails and I couldn’t unload it.

Oh no! That custom kernel isn’t tasting good. Don’t worry, as with many recipe preparations, we can search the internet for suggestions on how to make it tasty, alternative ingredients and other flavours according to your taste.

With kw patch-hub you can search on the lore kernel mailing list for possible patches that can fix your kernel issue. You can navigate in the mailing lists, check series, bookmark it if you find it relevant and apply it in your local kernel tree, creating a different branch for tasting… oops, for testing. In this example, I’m opening the amd-gfx mailing list where I can find contributions related to the AMD GPU driver, bookmark and/or just apply the series to my work tree and with kw bd I can compile & install the custom kernel with this possible bug fix in one shot.

As I changed my kw config to reboot after deployment, I just need to wait for the system to boot to try again unloading the amdgpu driver with kw debug --dmesg --cmd="modprobe -r amdgpu". From the dmesg output retrieved by kw for this command, the driver was unloaded, the problem is fixed by this series and the kernel tastes good now.

If I’m satisfied with the solution, I can even use kw patch-hub to access the bookmarked series and mark the checkbox that will reply to the patch thread with a Reviewed-by tag for me.

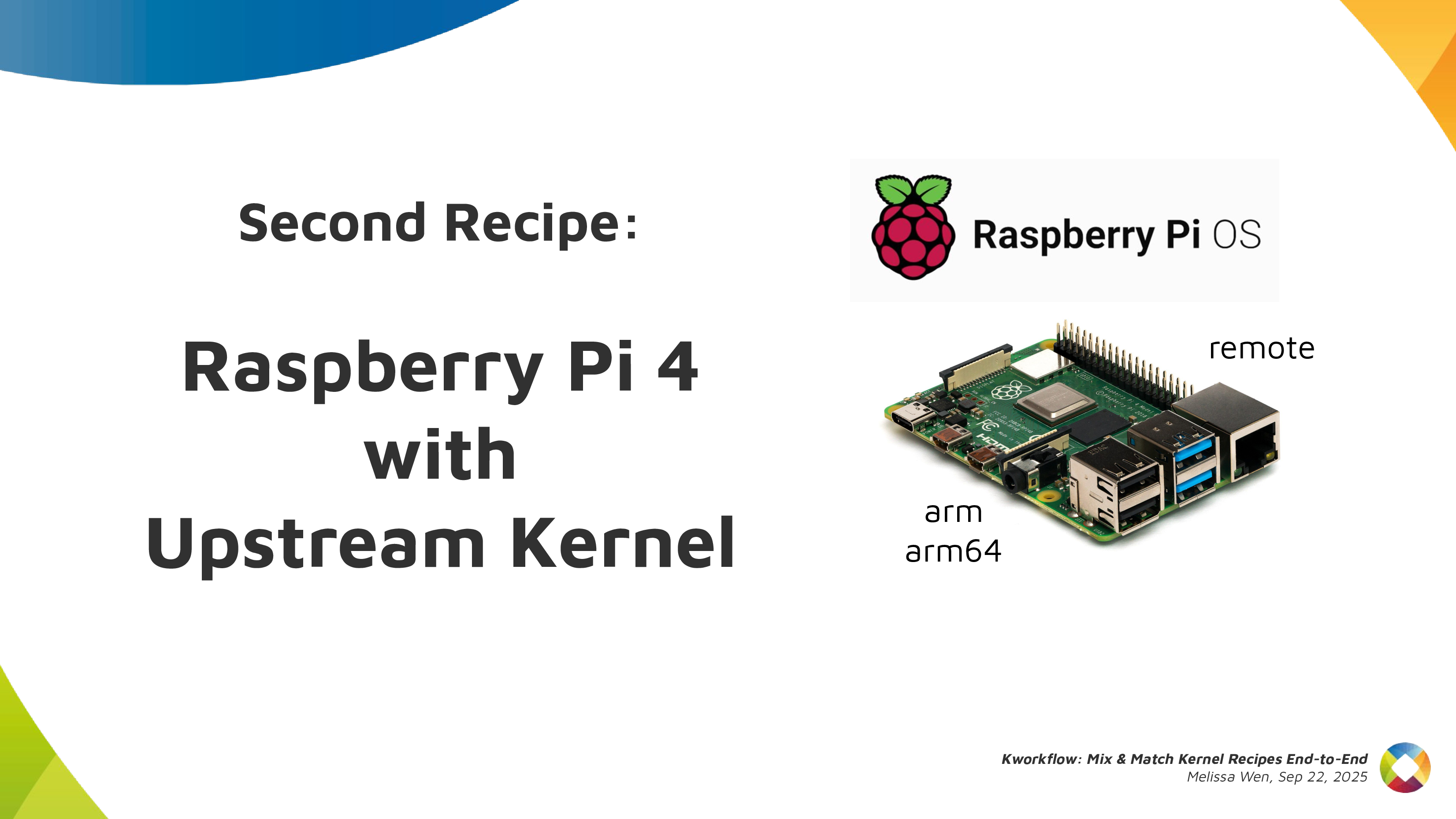

As in all recipes, we need ingredients and tools, but with kworkflow you can get everything set as when changing scenarios in a TV show. We can use kw env to change to a different environment with all kw and kernel configuration set and also with the latest compiled kernel cached.

I was preparing the first recipe for an x86 AMD laptop and with kw env --use RPI_64 I use the same worktree but move to a different kernel workflow, now for the 64-bit Raspberry Pi 4. The previously compiled kernel 6.17.0-rc6-mainline+ is there with 1266 modules, not the 6.17.0-rc6 kernel with 285 modules that I just built and deployed. The kw build settings are also different: now I’m targeting an arm64 architecture with a cross-compiled kernel using the aarch64-linux-gnu- cross-compilation tool, and my kernel image is called kernel8 now.

If you didn’t plan for this recipe in advance, don’t worry. You can create a new environment with kw env --create RPI_64_V2 and run kw init --template to start preparing your kernel recipe with the mirepoix ready.

I mean, with the basic ingredients already cut…

I mean, with the kw configuration set from a template.

And you can use kw remote to set the IP address of your target machine and kw kernel-config-manager to fetch/retrieve the .config file from your target machine. So just run kw bd to compile and install an upstream kernel for Raspberry Pi 4.

Let’s show you how easy it is to build, install and test a custom kernel for Steam Deck with Kworkflow. It’s a live demo, but I also recorded it because I know the risks I’m exposed to and something can go very wrong just because of reasons :)

For this live demo, I took my OLED Steam Deck to the stage. I explained that, if I boot mainline kernel on this device, there is no audio. So I turned it on and booted the mainline kernel I’ve installed beforehand. It was clear that there was no typical Steam Deck startup audio when the system was loaded.

As I started the demo in the kw environment for Raspberry Pi 4, I first moved to another environment previously used for Steam Deck. In this STEAMDECK environment, the mainline kernel was already compiled and cached, and all settings for accessing the target machine, compiling and installing a custom kernel were retrieved automatically.

My live demo followed these steps:

1. With kw env --use STEAMDECK, switch to a kworkflow environment for Steam Deck kernel development.

2. With kw b -i, show that kw will compile and install a kernel with 285 modules named 6.17.0-rc6-mainline-for-deck.

3. Run kw config to show that, in this environment, the kw configuration changes to x86 architecture and without cross-compilation.

4. Run kw device to display information about the Steam Deck device, i.e. the target machine. It also proves that the remote access (user and IP) for this Steam Deck was already configured when using the STEAMDECK environment, as expected.

5. Using git am, as usual, apply a hot fix on top of the mainline kernel. This hot fix makes the audio play again on Steam Deck.

6. With kw b, build the kernel with the audio change. It will be fast because we are only compiling the affected files since everything was previously done and cached. The compiled kernel, kw configuration and kernel configuration are retrieved by just moving to the “STEAMDECK” environment.

7. Run kw d --force --reboot to deploy the new custom kernel to the target machine. The --force option enables us to install the mainline kernel even if mkinitcpio complains about missing support for downstream packages when generating initramfs. The --reboot option makes the Steam Deck reboot automatically, just after the deployment completes.

After finishing deployment, the Steam Deck will reboot on the new custom kernel version and make a clear resonant or vibrating sound. [Hopefully]

Finally, I showed the audience that, if I wanted to send this patch upstream, I just needed to run kw send-patch and kw would automatically add subsystem maintainers, reviewers and mailing lists for the affected files as recipients, and send the patch for upstream community assessment. As I didn’t want to create unnecessary noise, I just did a dry-run with kw send-patch -s --simulate to explain how it looks.

In this presentation, I showed that kworkflow supports different kernel development workflows, i.e., multiple distributions, different bootloaders and architectures, different target machines and different debugging tools. It automates the best practices of your kernel development routine, from setting up the development environment and verifying a custom kernel on bare metal to sending contributions upstream following the contributions-by-e-mail structure. I exemplified it with three different target machines: my ordinary x86 AMD laptop with Debian, Raspberry Pi 4 with arm64 Raspbian (cross-compilation) and the Steam Deck with SteamOS (x86 Arch-based OS). Besides those distributions, Kworkflow also supports Ubuntu, Fedora and PopOS.

Now it’s your turn: Do you have any secret recipes to share? Please share with us via kworkflow.

We’ve reached Q4 of another year, and after the mad scramble that has been crunch-time over the past few weeks, it’s time for SGC to once again retire into a deep, restful sleep.

2025 saw a lot of ground covered:

It’s been a real roller coaster ride of a year as always, but I can say authoritatively that fans of the blog, you need to take care of yourselves. You need to use this break time wisely. Rest. Recover. Train your bodies. Travel and broaden your horizons. Invest in night classes to expand your minds.

You are not prepared for the insanity that will be this blog in 2026.

Today marks the first post of a type that I’ve wanted to have for a long while: a guest post. There are lots of graphics developers who work on cool stuff and don’t want to waste time setting up blogs, but with enough cajoling they will write a single blog post. If you’re out there thinking you just did some awesome work and you want the world to know the grimy, gory details, let me know.

The first ~~victim~~ recipient of this honor is an individual famous for small and extremely sane endeavors such as descriptor buffers in Lavapipe, ray tracing in Lavapipe, and sparse support in Lavapipe. Also wrangling ray tracing for RADV.

Below is the debut blog post by none other than Konstantin Seurer.

Apitrace is a powerful tool for capturing and replaying traces of GL and DX applications. The problem is that it is not really suitable for performance testing. This blog post is about implementing a faster method for replaying traces.

About six weeks ago, Mike asked me if I wanted to work on this.

[6:58:58 pm] <zmike> on the topic of traces

[6:59:08 pm] <zmike> I have a longer-term project that could use your expertise

[6:59:19 pm] <zmike> it's low work but high complexity

[7:00:12 pm] <zmike> specifically I would like apitrace to be able to competently output C code from traces and to have this functionality merged upstream

low work

glretrace

The first obvious step was measuring how glretrace currently performs. Mike kindly provided a couple of traces from his personal collection, and I immediately timed a trace of the only relevant OpenGL game:

$ time ./glretrace -b minecraft-perf.trace

/Users/Cortex/Downloads/graalvm-jdk-23.0.1+11.1/bin/java

Rendered 1261 frames in 10.4269 secs, average of 120.937 fps

real 0m10.554s

user 0m12.938s

sys 0m2.712s

This looks fine, but I have no idea how fast the application is supposed to run. Running the same trace with perf reveals that there is room for improvement.

2/3 of frametime is spent parsing the trace.

An apitrace trace stores API call information in an object-oriented style. This makes basic codegen really easy because the objects map directly to the generated C/C++ code. However, not all API calls are made equal, and the countless special cases that I needed to handle are what made this project take so long.

glretrace has custom implementations for WSI API calls, and it would be a shame not to use them. The easiest way of doing that is generating a shared library instead of an executable and having glretrace load it. The shared library can then provide a bunch of callbacks for the call sequences we can do codegen for and Call objects for everything else.

Besides WSI, there are also arguments and return values that need special treatment. OpenGL allows the application to create all kinds of objects that are represented using IDs. Those IDs are assigned by the driver, and they can be different during replay. glretrace remaps them using std::maps, which have non-trivial overhead. I initially did that as well for the codegen to get things up and running, but it is actually possible to emit global variables and have most of the remapping logic run during codegen.
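To illustrate the difference, here is a minimal sketch in C. It does not use real OpenGL and is not taken from the PR: `fake_gen_texture()` is a hypothetical stand-in for the driver handing out object names, a plain array stands in for the std::map, and every other name is invented for this example. The first path consults a table on every call; the second mirrors what generated code could look like, with one global variable per traced object so that the lookup is resolved when the code is generated.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for the driver: hands out object names that
 * differ from the ones recorded in the trace. */
unsigned next_name = 100;
unsigned fake_gen_texture(void) { return next_name++; }

/* Runtime remapping: a table from traced ID to replay ID that has to be
 * consulted on every call referencing the object. */
#define MAX_TRACED_IDS 16
unsigned remap_table[MAX_TRACED_IDS];

void table_record(unsigned traced_id, unsigned replay_id) {
    assert(traced_id < MAX_TRACED_IDS);
    remap_table[traced_id] = replay_id;
}

unsigned table_lookup(unsigned traced_id) {
    assert(traced_id < MAX_TRACED_IDS);
    return remap_table[traced_id];
}

/* Codegen-time remapping: the generator saw traced IDs 1 and 2, so it
 * emitted one global per object. Nothing is looked up at replay time. */
unsigned texture_1;
unsigned texture_2;

void replay_generated(void) {
    texture_1 = fake_gen_texture(); /* trace recorded ID 1 here */
    texture_2 = fake_gen_texture(); /* trace recorded ID 2 here */
    /* later calls would reference texture_1 / texture_2 directly */
}
```

In the generated variant, the mapping from traced ID to variable exists only in the generator, so the replay binary pays for a plain variable access instead of a container lookup.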

With the main replay overhead being taken care of, a major amount of replay time is now spent loading texture and buffer data. In large traces, there can also be >10GiB of data, so loading everything upfront is not an option. I decided to create one thread for reading the data file and nproc decompression threads. The read thread will wait if enough data has been loaded to limit memory usage. Decompression threads are needed because decompression is slower than reading the compressed data.
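The threading scheme described above can be sketched as a bounded producer/consumer queue. This is only an illustration under stated assumptions, not the code from the PR: the calling thread plays the role of the read thread, "chunks" are plain integers, the decompression step is a placeholder multiplication, and all names (`chunk_queue`, `run_pipeline`, `QUEUE_CAP`) are hypothetical.

```c
#include <assert.h>
#include <pthread.h>
#include <stdbool.h>
#include <stddef.h>

#define QUEUE_CAP 4 /* max chunks in flight: this is what bounds memory use */

struct chunk_queue {
    pthread_mutex_t lock;
    pthread_cond_t not_full, not_empty;
    int items[QUEUE_CAP];
    size_t head, count;
    bool closed; /* set once the reader has no more data */
    long total;  /* stand-in for the decompressed output */
};

/* Reader side: blocks while enough data is already loaded. */
void queue_push(struct chunk_queue *q, int chunk) {
    pthread_mutex_lock(&q->lock);
    while (q->count == QUEUE_CAP)
        pthread_cond_wait(&q->not_full, &q->lock);
    q->items[(q->head + q->count++) % QUEUE_CAP] = chunk;
    pthread_cond_signal(&q->not_empty);
    pthread_mutex_unlock(&q->lock);
}

/* Worker side: returns false once the queue is drained and closed. */
bool queue_pop(struct chunk_queue *q, int *chunk) {
    pthread_mutex_lock(&q->lock);
    while (q->count == 0 && !q->closed)
        pthread_cond_wait(&q->not_empty, &q->lock);
    bool ok = q->count > 0;
    if (ok) {
        *chunk = q->items[q->head];
        q->head = (q->head + 1) % QUEUE_CAP;
        q->count--;
        pthread_cond_signal(&q->not_full);
    }
    pthread_mutex_unlock(&q->lock);
    return ok;
}

void *decompress_worker(void *arg) {
    struct chunk_queue *q = arg;
    int chunk;
    while (queue_pop(q, &chunk)) {
        long out = (long)chunk * 2; /* placeholder for real decompression */
        pthread_mutex_lock(&q->lock);
        q->total += out;
        pthread_mutex_unlock(&q->lock);
    }
    return NULL;
}

/* "Reads" nchunks chunks on the calling thread and fans them out to
 * nworkers decompression threads. Returns the combined result. */
long run_pipeline(int nchunks, int nworkers) {
    struct chunk_queue q = { .head = 0 };
    pthread_t workers[16];
    assert(nworkers > 0 && nworkers <= 16);
    pthread_mutex_init(&q.lock, NULL);
    pthread_cond_init(&q.not_full, NULL);
    pthread_cond_init(&q.not_empty, NULL);
    for (int i = 0; i < nworkers; i++)
        pthread_create(&workers[i], NULL, decompress_worker, &q);
    for (int i = 1; i <= nchunks; i++)
        queue_push(&q, i);
    pthread_mutex_lock(&q.lock);
    q.closed = true; /* wake idle workers so they can exit */
    pthread_cond_broadcast(&q.not_empty);
    pthread_mutex_unlock(&q.lock);
    for (int i = 0; i < nworkers; i++)
        pthread_join(workers[i], NULL);
    pthread_cond_destroy(&q.not_empty);
    pthread_cond_destroy(&q.not_full);
    pthread_mutex_destroy(&q.lock);
    return q.total;
}
```

Calling `run_pipeline(100, 4)` pushes 100 chunks through four workers; build with `-pthread`. The small queue capacity is the knob that trades memory for how far the reader may run ahead of the decompressors.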

The results speak for themselves:

$ ./glretrace --generate-c minecraft-perf minecraft-perf.trace

/Users/Cortex/Downloads/graalvm-jdk-23.0.1+11.1/bin/java

Rendered 0 frames in 79.4072 secs, average of 0 fps

$ cd minecraft-perf

$ echo "Invoke the superior build tool"

$ meson build --buildtype release

$ ninja -Cbuild

$ time ../glretrace build/minecraft-perf.so

info: Opening 'minecraft-perf.so'... (0.00668795 secs)

warning: Waited 0.0461142 secs for data (sequence = 19)

Rendered 1261 frames in 5.19587 secs, average of 242.693 fps

real 0m5.415s

user 0m5.429s

sys 0m4.983s

Nice.

Looking at perf, most CPU time is now spent in driver code or streaming binary data for stuff like textures on a separate thread.

If you are interested in trying this out yourself, feel free to build the upstream PR and report on bugs, er, unintended features. It would also be nice to have DX support in the future, but that will be something for the dxvk developers unless I need something to procrastinate from doing RT work.

- Konstantin

Hi!

I skipped last month’s status update because I hadn’t collected a lot of interesting updates and I’ve dedicated my time to writing an announcement for the first vali release.

Earlier this month, I took the train to Vienna to attend XDC 2025. The conference was great, I really enjoyed discussing face-to-face with open-source graphics folks I usually only interact with online, and meeting new awesome people! Since I’m part of the X.Org Foundation board, it was also nice to see the fruit of our efforts. Many thanks to all organizers!

We’ve discussed many interesting topics: a new API for 2D acceleration hardware, adapting the Wayland linux-dmabuf protocol to better support multiple GPUs, some ways to address current Wayback limitations, ideas to improve libliftoff, Vulkan use in Wayland compositors, and a lot more.

On the wlroots side, I’ve worked on a patch to fall back to the renderer to apply gamma LUTs when the KMS driver doesn’t support them (this also paves the way for applying color transforms in KMS). Félix Poisot has updated wlroots to support the gamma 2.2 transfer function and use it by default. llyyr has added support for the BT.1886 transfer function and fixed direct scanout for clients using the gamma 2.2 transfer function.

I’ve sent a patch to add support for DisplayID v2 CTA-861 data blocks, required for handling some HDR screens. I’ve reviewed and merged a bunch of gamescope patches to avoid protocol errors with the color management protocol, fix nested mode under a Vulkan compositor, fix a crash on VT switch and modernize dependencies.

I’ve worked a bit on drm_info too. I’ve added a JSON schema to describe the shape of the JSON objects, made it so EDIDs are included in the JSON output as base64-encoded strings, and added the EDID make/model/serial + bus info to the pretty-printed output.

delthas has added soju support for user metadata, introduced a new work-in-progress metadata key to block users, and made it so soju cancels Web Push notifications if a client marks a message as read (to avoid opening notifications for a very short time when actively chatting with another user). Markus Cisler has revamped Goguma’s message bubbles: they look much better now!

See you next month!

A while ago I wrote about the limited usefulness of SO_PEERPIDFD for authenticating sandboxed applications. The core problem was simple: while pidfds gave us a race-free way to identify a process, we still had no standardized way to figure out what that process actually was - which sandbox it ran in, what application it represented, or what permissions it should have.

The situation has improved considerably since then.

Cgroups now support user extended attributes. This feature allows arbitrary metadata to be attached to cgroup inodes using standard xattr calls.

We can change flatpak (or snap, or any other container engine) to create a cgroup for application instances it launches, and attach metadata to it using xattrs. This metadata can include the sandboxing engine, application ID, instance ID, and any other information the compositor or D-Bus service might need.

Every process belongs to a cgroup, and you can query which cgroup a process belongs to through its pidfd - completely race-free.
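As a rough sketch of the last step, here is how a service could read one metadata value from a cgroup directory with the standard `getxattr()` call. Everything specific is an assumption made for illustration: the attribute name `user.example.app_id` and the example cgroup path are hypothetical, and resolving the cgroup directory from the client's pidfd is not shown.

```c
#include <assert.h>
#include <stddef.h>
#include <sys/types.h>
#include <sys/xattr.h>

/* Reads the xattr `name` from the cgroup directory `cgroup_dir` into
 * `buf` as a NUL-terminated string. Returns the value length, or -1 on
 * error (no such cgroup, no such attribute, or value too large for buf). */
ssize_t read_cgroup_xattr(const char *cgroup_dir, const char *name,
                          char *buf, size_t buf_size) {
    assert(buf_size > 1);
    /* Leave one byte of room for the terminator we add ourselves. */
    ssize_t n = getxattr(cgroup_dir, name, buf, buf_size - 1);
    if (n < 0)
        return -1;
    buf[n] = '\0';
    return n;
}
```

A service would call it along the lines of `read_cgroup_xattr("/sys/fs/cgroup/some/app.scope", "user.example.app_id", buf, sizeof(buf))`, treating a return value of -1 as "no metadata available" and falling back to its unsandboxed policy.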

Remember the complexity from the original post? Services had to implement different lookup mechanisms for different sandbox technologies:

- /proc/$PID/root/.flatpak-info
- snap routine portal-info

All of this goes away. Now there’s a single path:

- SO_PEERPIDFD to get a pidfd for the client

This works the same way regardless of which sandbox engine launched the application.

It’s worth emphasizing: cgroups are a Linux kernel feature. They have no dependency on systemd or any other userspace component. Any process can manage cgroups and attach xattrs to them. The process only needs appropriate permissions and is restricted to a subtree determined by the cgroup namespace it is in. This makes the approach universally applicable across different init systems and distributions.

To support non-Linux systems, we might even be able to abstract away the cgroup details, by providing a varlink service to register and query running applications. On Linux, this service would use cgroups and xattrs internally.

The old approach - creating dedicated wayland, D-Bus, etc. sockets for each app instance and attaching metadata to the service which gets mapped to connections on that socket - can now be retired. The pidfd + cgroup xattr approach is simpler: one standardized lookup path instead of mounting special sockets. It works everywhere: any service can authenticate any client without special socket setup. And it’s more flexible: metadata can be updated after process creation if needed.

For compositor and D-Bus service developers, this means you can finally implement proper sandboxed client authentication without needing to understand the internals of every container engine. For sandbox developers, it means you have a standardized way to communicate application identity without implementing custom socket mounting schemes.

Just a quick post to confirm that the OpenGL/ES Working Group has signed off on the release of GL_EXT_mesh_shader.

This is a monumental release, the largest extension shipped for GL this decade, and the culmination of many, many months of work by AMD. In particular we all need to thank Qiang Yu (AMD), who spearheaded this initiative and did the vast majority of the work both in writing the specification and doing the core mesa implementation. Shihao Wang (AMD) took on the difficult task of writing actual CTS cases (not mandatory for EXT extensions in GL, so this is a huge benefit to the ecosystem).

Big thanks to both of you, and everyone else behind the scenes at AMD, for making this happen.

Also we have to thank the nvidium project and its author, Cortex, for single-handedly pushing the industry forward through the power of Minecraft modding. Stay sane out there.

Minecraft mod support is already underway, so expect that to happen “soon”.

The bones of this extension have already been merged into mesa over the past couple months. I opened an MR to enable zink support this morning since I have already merged the implementation.

Currently, I’m planning to wait until either just before the branch point next week or until RadeonSI merges its support to merge the zink MR. This is out of respect: Qiang Yu did a huge lift for everyone here, and ideally AMD’s driver should be the first to be able to advertise that extension to reflect that. But the branch point is coming up in a week, and SGC will be going into hibernation at the end of the month until 2026, so this offer does have an expiration date.

In any case, we’re done here.

In the past months I’ve been working on vali, a C library for Varlink. Today I’m publishing the first vali release! I’d like to explain how to use it for readers who aren’t especially familiar with Varlink, and describe some interesting API design decisions.

Varlink is a very simple Remote Procedure Call (RPC) protocol. Clients can call methods exposed by services (i.e., servers). To call a method, a client sends a JSON object with its name and parameters over a Unix socket. To reply to a call, a service sends a JSON object with response parameters. That’s it.

Here’s an example request with a bar parameter containing an integer:

    {
        "method": "org.example.service.Foo",
        "parameters": {
            "bar": 42
        }
    }

And here’s an example response with a baz parameter containing a list of strings:

    {
        "parameters": {
            "baz": ["hello", "world"]
        }
    }

Varlink also supports calls with no reply or with multiple replies, but let’s leave this out of the picture for simplicity’s sake.
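On the wire, each Varlink message is a JSON object followed by a single NUL byte. The sketch below formats the example request by hand to make that framing visible. It is an illustration only: `format_foo_call()` is a hypothetical helper, not part of vali or libvarlink, and it does no JSON escaping of the method name.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Formats a call to `method` with a single integer parameter "bar" into
 * `buf`. Returns the number of bytes to send, including the terminating
 * NUL that ends a Varlink message, or 0 if `buf` is too small.
 * Assumption: `method` contains no characters that need JSON escaping. */
size_t format_foo_call(char *buf, size_t buf_size, const char *method, int bar) {
    assert(buf != NULL && method != NULL);
    int n = snprintf(buf, buf_size,
                     "{\"method\":\"%s\",\"parameters\":{\"bar\":%d}}",
                     method, bar);
    if (n < 0 || (size_t)n >= buf_size)
        return 0;
    /* snprintf already wrote the NUL; count it as part of the message. */
    return (size_t)n + 1;
}
```

The returned length is what a client would pass to `write()` or `send()` on the connected Unix socket, so that the terminator goes out together with the JSON text.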

Varlink services can describe the methods they implement with an interface definition file.

method Foo(bar: int) -> (baz: []string)

Coming from the Wayland world, I love generating code from specification files. This removes all of the manual encoding/decoding boilerplate and is more type-safe. Unfortunately the official libvarlink library doesn’t support code generation (and is not actively maintained anymore), so I’ve decided to write my own. vali is the result!

To better understand the benefits of code generation and vali design decisions, let’s take a minute to have a look at what usage without code generation looks like.

A client first needs to connect via vali_client_connect_unix(), then call vali_client_call() with a JSON object containing input parameters. It’ll get back a JSON object containing output parameters, which needs to be parsed.

    struct vali_client *client = vali_client_connect_unix("/run/org.example.service");
    if (client == NULL) {
        fprintf(stderr, "Failed to connect to service\n");
        exit(1);
    }

    struct json_object *in = json_object_new_object();
    json_object_object_add(in, "bar", json_object_new_int(42));

    struct json_object *out = NULL;
    if (!vali_client_call(client, "org.example.service.Foo", in, &out, NULL)) {
        fprintf(stderr, "Foo request failed\n");
        exit(1);
    }

    struct json_object *baz = json_object_object_get(out, "baz");
    for (size_t i = 0; i < json_object_array_length(baz); i++) {
        struct json_object *item = json_object_array_get_idx(baz, i);
        printf("%s\n", json_object_get_string(item));
    }

This is a fair amount of boilerplate. In case of a type mismatch, the client will silently print nothing, which isn’t ideal.

The last parameter of vali_client_call() is an optional struct vali_error *: if set to a non-NULL pointer and the service replies with an error, the struct is populated, otherwise it’s zero’ed out:

    struct vali_error err;
    if (!vali_client_call(client, "org.example.service.Foo", in, &out, &err)) {
        if (err.name != NULL) {
            fprintf(stderr, "Foo request failed: %s\n", err.name);
        } else {
            fprintf(stderr, "Foo request failed: internal error\n");
        }
        vali_error_finish(&err);
        exit(1);
    }

What does the service side look like? A service first calls vali_service_create() to initialize a fresh service, defines a callback to be invoked when a Varlink call is performed by a client via vali_service_set_call_handler(), and sets up a Unix socket via vali_service_listen_unix(). Let’s demonstrate how a service accesses a shared state by printing the number of calls done so far when the callback is invoked. The callback needs to end the call with vali_service_call_close_with_reply().