If you’ve ever looked at a GPU render and seen blue where red should be, you’ve met the R/B swap problem. For etnaviv this has been a long-standing source of complexity. We were solving it in the shader, but the proprietary blob driver had a simpler approach all along. As part of my work at Igalia, I finally sat down and did it properly.

Vivante GPUs have a quirk: the Pixel Engine (PE) always writes pixels in BGRA byte order. When your API says “render to R8G8B8A8_UNORM”, what actually lands in memory is B, G, R, A. Every byte of every pixel, every frame. The hardware just works that way.
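To make the byte order concrete: if the PE writes B, G, R, A and the consumer expects R, G, B, A, then bytes 0 and 2 of every pixel are exchanged. The C sketch below shows that shuffle on a CPU buffer. The helper name is hypothetical, and it only illustrates the memory layout, not how etnaviv or the blob actually handle the swap.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: swap the R and B channels in place for a buffer of
 * 4-byte pixels. Turns B,G,R,A bytes into R,G,B,A (and back again, since
 * the swap is its own inverse). */
static void swap_rb_inplace(uint8_t *pixels, size_t pixel_count)
{
    for (size_t i = 0; i < pixel_count; i++) {
        uint8_t *p = pixels + 4 * i;
        uint8_t tmp = p[0];  /* byte 0: B as written by the PE */
        p[0] = p[2];         /* byte 2: R moves to the front */
        p[2] = tmp;
        /* bytes 1 (G) and 3 (A) are already where the API expects them */
    }
}
```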

As part of Igalia’s collaboration with Raspberry Pi, I have previously blogged about several improvements we landed for the Broadcom VideoCore GPU (known as V3D), with the goal of extracting the best possible performance from the hardware. However, performance is not the whole story. On embedded devices, power consumption is just as important: reducing unnecessary activity helps lower heat generation, improve energy efficiency, and preserve performance over time by avoiding thermal throttling.

That is why, over the last few months, we have been working on adding Runtime Power Management support to the upstream V3D DRM driver, allowing the GPU to be powered and clocked according to its actual usage.

In the Linux kernel, Runtime Power Management (known as Runtime PM) is the mechanism that allows individual devices to be suspended and resumed dynamically while the system as a whole remains running. Instead of keeping a device fully powered all the time, the kernel can put the device into a low-power state when it is idle and bring it back when it is needed again.

In the graphics context, it is easy to see why runtime PM can be useful. A GPU is not necessarily active all the time: it may be heavily used while rendering a scene, but remain idle for long periods afterwards. If the driver keeps the GPU clocked during those idle periods, the system keeps spending energy on a block that is not doing useful work at all.

For embedded platforms, this is even more pressing. Reducing unnecessary power consumption helps decrease heat generation and improve overall energy efficiency. Even if the board is not battery-powered, avoiding needless power usage can reduce the need for cooling and leave more thermal budget available for other blocks.

Until now, the V3D driver had a very simple power model: the GPU clock was enabled during probe and remained enabled for the entire lifetime of the driver. In practice, this meant that once the driver was loaded, the V3D clock stayed on until the driver was removed, regardless of whether the GPU was actively executing jobs. This was simple and functional, but it meant that an idle GPU was not idle from a power-management point of view.

On Raspberry Pi platforms, this is easy to observe with vcgencmd. Even with no GPU workload running, the V3D clock would still report an enabled frequency:

```console
$ vcgencmd measure_clock v3d
frequency(0)=960016128
```

If the GPU is idle, the driver should be able to let the hardware become idle as well. Runtime PM provides the kernel infrastructure for that, but enabling it in the V3D driver required a bit more than simply adding suspend and resume callbacks.

At first glance, adding Runtime PM to V3D might look like a driver-local change, but in practice, things were a bit more subtle.

On Raspberry Pi platforms, some clocks are managed by the Raspberry Pi firmware. From the V3D driver’s point of view, this is supposed to be mostly transparent: the driver uses the standard Linux clock framework, and the clock provider takes care of talking to the firmware underneath. However, this abstraction only works if calls to clk_prepare_enable() and clk_disable_unprepare() are translated into actual firmware requests to enable and disable the clock.

Surprisingly, that was not happening. The Raspberry Pi firmware clock driver did not implement the prepare/unprepare hooks, so these calls did not actually ask the firmware to enable or disable the clock. We fixed that by translating the common clock framework operations into the corresponding Raspberry Pi firmware commands [1][2][3].

However, there was still one firmware-specific caveat: on current firmware versions, RPI_FIRMWARE_SET_CLOCK_STATE does not fully power off the clock as expected. To work around this limitation and achieve meaningful power savings, the clock rate also needs to be set to the minimum before disabling the clock. This behavior may change in future firmware releases, but for now the clock driver needs to account for it explicitly.

With the firmware clock limitation addressed, the V3D driver could rely on the usual kernel clock APIs as part of its Runtime PM flow. The next step was to move the V3D driver itself to a Runtime PM model [7][8][9], reorganizing it so that powering the GPU up and down became part of its normal operation.

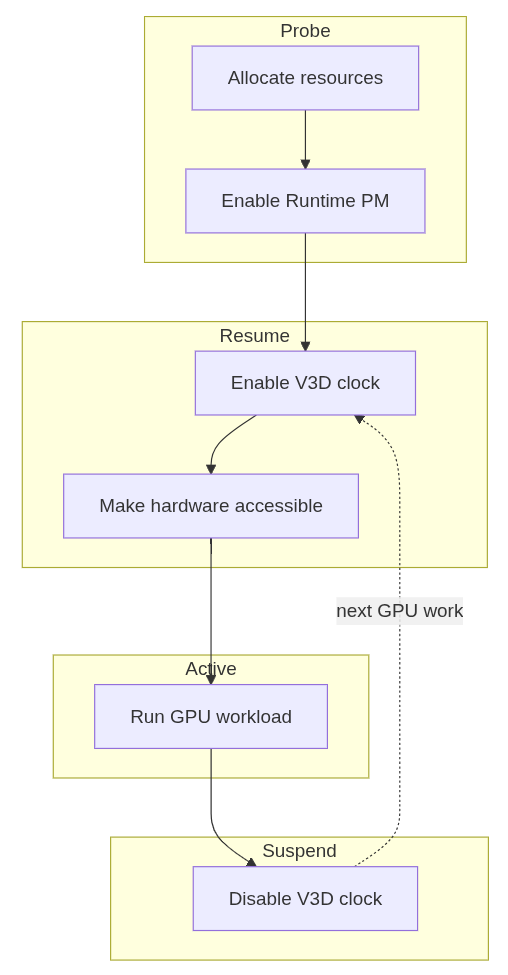

This required a small refactor of the probe path to separate power-independent setup from GPU-powered initialization. Resources that do not require the GPU to be powered are allocated during probe, while any initialization that depends on the GPU being clocked is handled during runtime resume. Runtime suspend then disables the clock again when the device becomes idle.
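Runtime PM is driven by a per-device usage count: each user takes a reference before touching the hardware and drops it afterwards; the resume callback runs on the 0 to 1 transition and the suspend callback on the 1 to 0 transition. The following is a userspace model of that bookkeeping only, not kernel code. In a kernel driver the equivalent calls are typically pm_runtime_resume_and_get() and pm_runtime_put(), and the callbacks would enable or disable the clock; all struct and function names below are hypothetical.

```c
#include <stdbool.h>

/* Userspace model of runtime PM reference counting (not kernel code). */
struct rpm_model {
    int usage_count;     /* number of active users of the device */
    bool clock_enabled;  /* stands in for the V3D clock state */
    int resume_calls;    /* how often the "resume" callback ran */
    int suspend_calls;   /* how often the "suspend" callback ran */
};

/* Stand-in for a runtime_resume callback: clock the GPU and re-init it. */
static void model_runtime_resume(struct rpm_model *dev)
{
    dev->clock_enabled = true;
    dev->resume_calls++;
}

/* Stand-in for a runtime_suspend callback: gate the clock again. */
static void model_runtime_suspend(struct rpm_model *dev)
{
    dev->clock_enabled = false;
    dev->suspend_calls++;
}

/* Take a reference before submitting work; resume on the 0 -> 1 edge. */
static void model_get(struct rpm_model *dev)
{
    if (dev->usage_count++ == 0)
        model_runtime_resume(dev);
}

/* Drop the reference when a job completes; suspend on the 1 -> 0 edge.
 * (The kernel can defer this step with an autosuspend delay.) */
static void model_put(struct rpm_model *dev)
{
    if (--dev->usage_count == 0)
        model_runtime_suspend(dev);
}
```

Two overlapping jobs cause only one resume, and the clock is gated only after the last job has completed.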

With that in place, the change becomes visible from userspace. While a GPU workload such as glmark2 is running, the V3D clock is enabled:

```console
$ vcgencmd measure_clock v3d
frequency(0)=960016128
```

After the workload finishes and the GPU becomes idle, the clock can drop back to zero:

```console
$ vcgencmd measure_clock v3d
frequency(0)=0
```

This is the behavior we wanted: the GPU remains available when there is work to do, but it no longer keeps its clock enabled while idle.

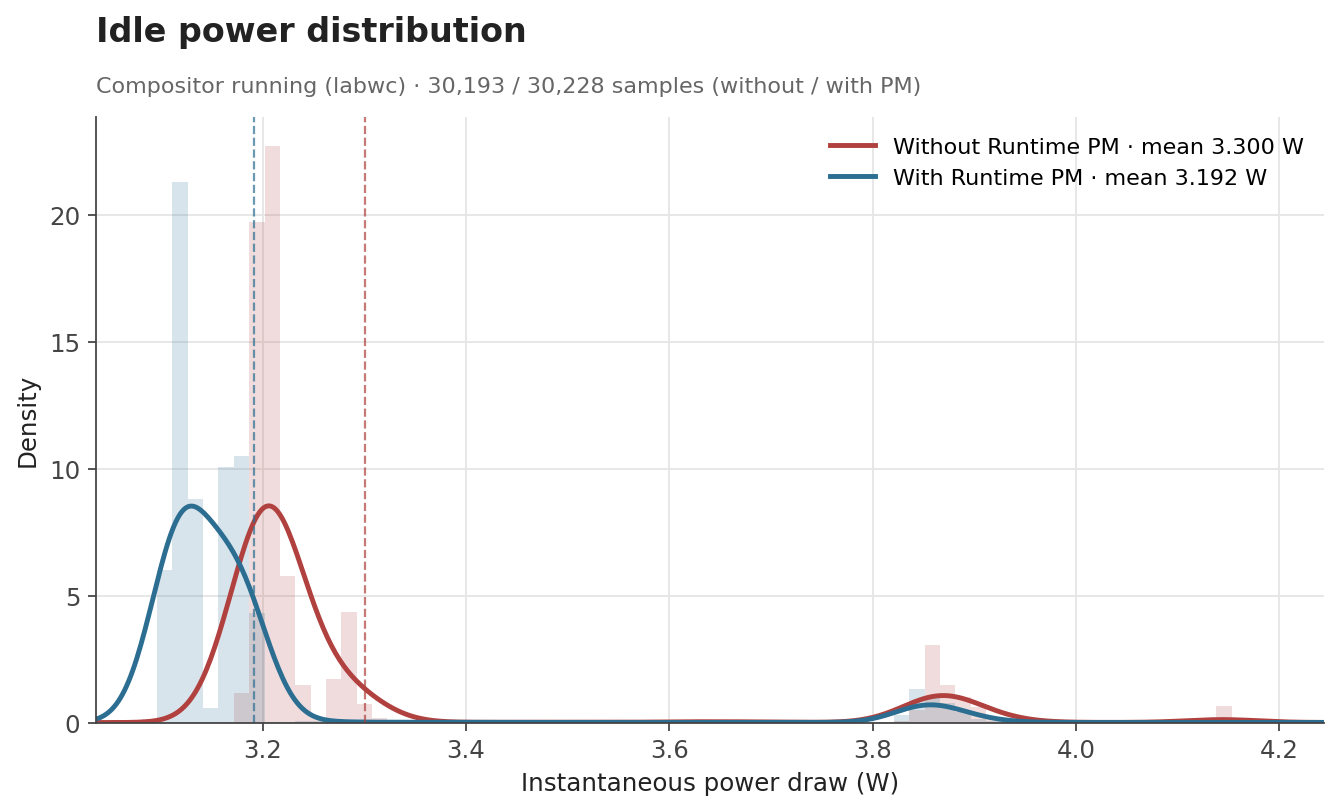

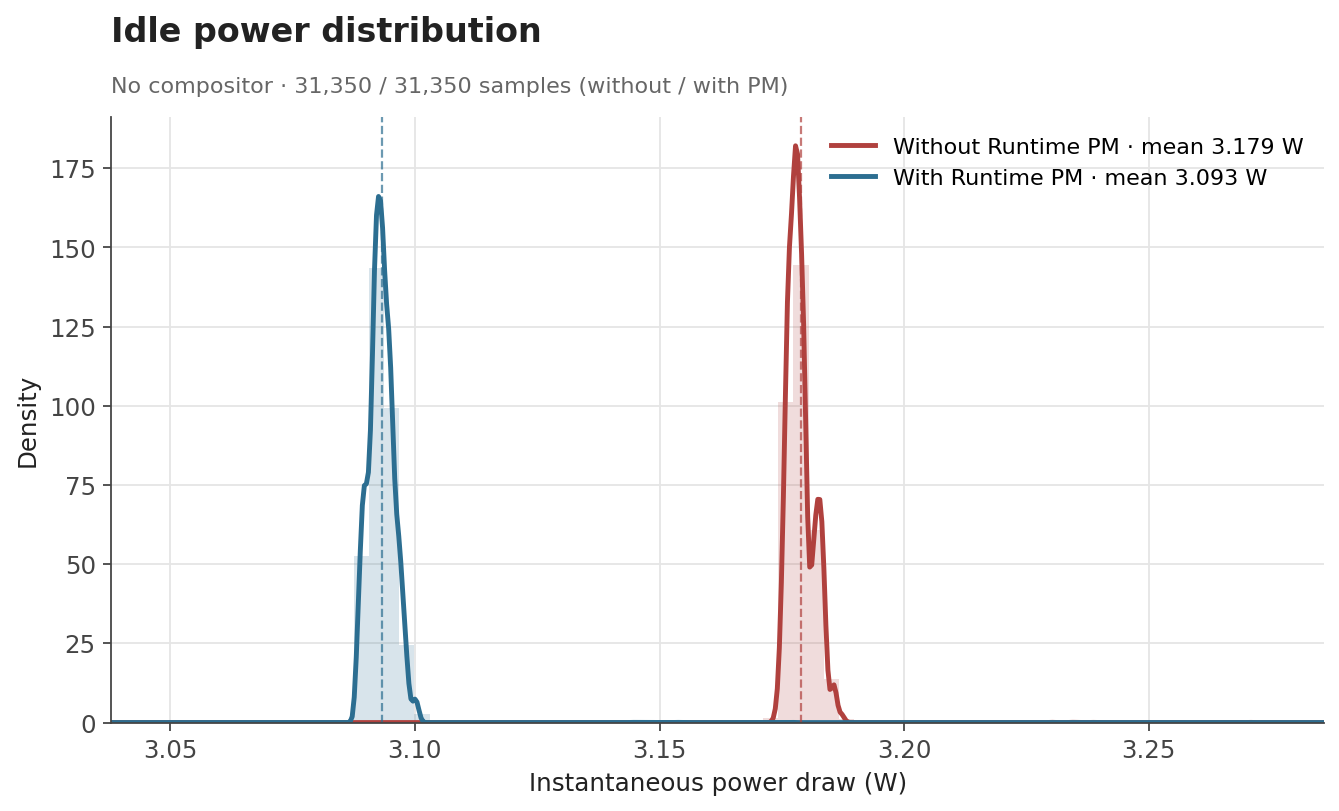

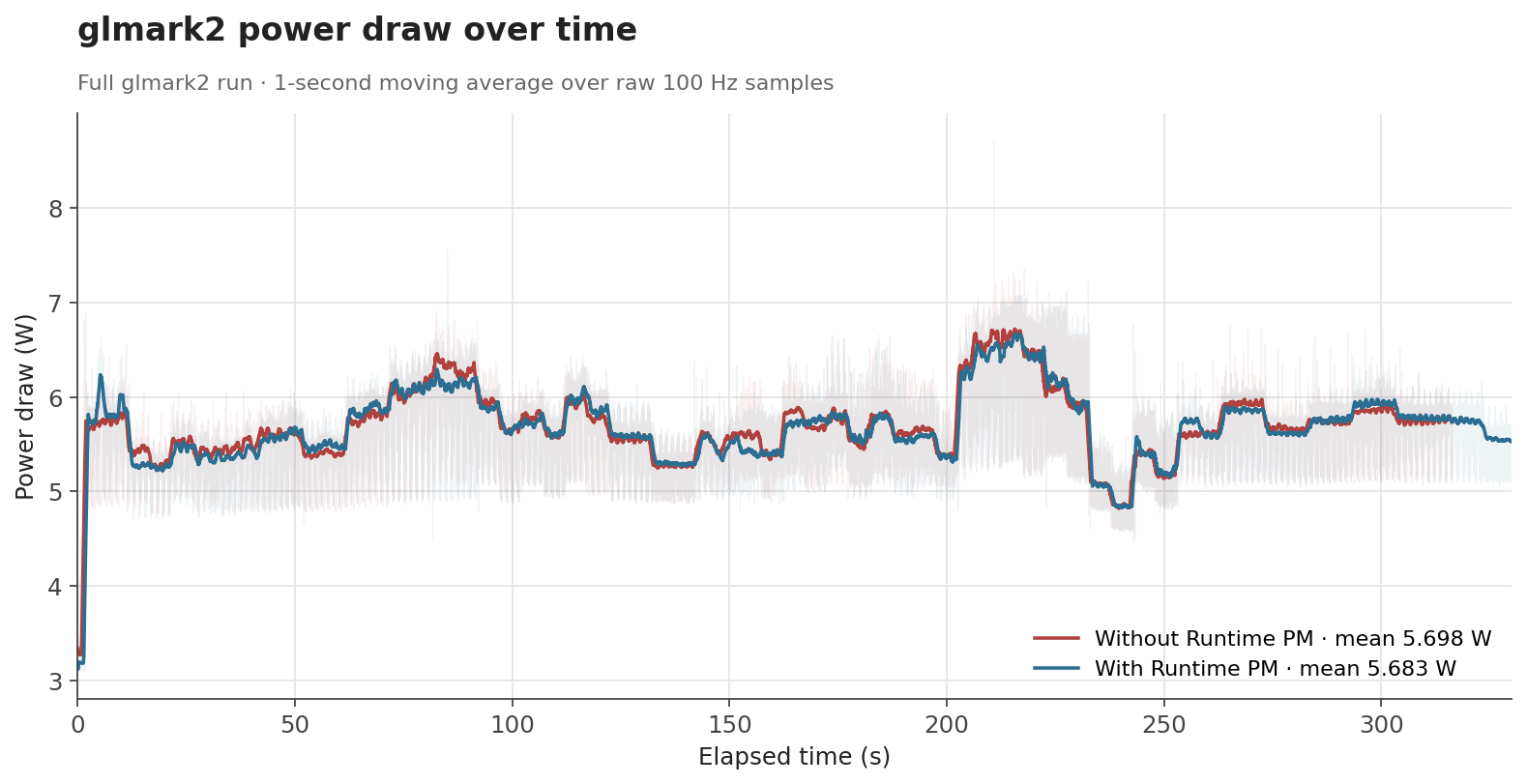

To evaluate the effect of Runtime PM, we measured the board’s power consumption with an external power meter in three scenarios: an idle desktop session with labwc running, an idle system without the compositor, and a full glmark2 run. Each condition was sampled at 100 Hz for around 300 seconds.

The first case represents a mostly idle graphical session, where labwc, the compositor used by Raspberry Pi OS, may still wake the GPU occasionally. The second is a baseline with no graphical workload, while the third is a sustained GPU benchmark intended to keep the GPU active.

The numbers behave the way one would hope. When the GPU is genuinely idle, the clock can be gated off and the savings show up as a clear drop: average draw falls from 3.30 W to 3.19 W with labwc running, and from 3.18 W to 3.09 W with no compositor at all. Both idle scenarios end up with savings of about 0.1 W (around 3%). Under glmark2, where the GPU is doing useful work for most of the run, the difference shrinks to about 0.015 W (0.3%), which is expected, as Runtime PM mainly affects the periods where the GPU becomes idle.

| Scenario | Without Runtime PM (avg.) | With Runtime PM (avg.) | Difference |
|---|---|---|---|
| Idle, compositor running | 3.300 W | 3.192 W | -0.108 W (-3.3%) |
| Idle, no compositor | 3.179 W | 3.093 W | -0.086 W (-2.7%) |
| glmark2 full run | 5.698 W | 5.683 W | -0.015 W (-0.3%) |
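The Difference column is the relative change (after - before) / before. A tiny helper, which is hypothetical and only meant to check the arithmetic, reproduces the rounded percentages from the measured averages. For the compositor case, (3.192 - 3.300) / 3.300 is about -3.27%.

```c
/* Relative change in percent between two average power readings (W). */
static double percent_change(double before_w, double after_w)
{
    return (after_w - before_w) / before_w * 100.0;
}
```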

The distribution of idle samples with labwc running also shows the effect clearly. With Runtime PM enabled, the distribution shifts toward lower power states. This indicates that the board spends more time in lower-power idle states once the V3D clock is no longer kept enabled unnecessarily.

The effect is even cleaner with no compositor running. The samples collapse into two very narrow peaks with no overlap between them: without Runtime PM, the board sits at a stable 3.18 W; with Runtime PM, it sits at a stable 3.09 W.

For glmark2, the time-series data shows that both configurations follow the same general workload pattern. Runtime PM does not significantly change the power profile while the GPU is busy, which is the intended behavior. The benefit appears when the workload leaves idle gaps or finishes, allowing the clock to be disabled again.

Overall, these measurements show that Runtime PM reduces power consumption where it matters most: when the GPU is idle. The absolute savings are modest at the board level, since the measurement includes the whole Raspberry Pi rather than the GPU power block alone, but the reduction is consistent with the intended change. The V3D clock no longer remains enabled for the full lifetime of the driver, and that translates into measurable reductions in idle power consumption.

Runtime PM support for V3D is one of those changes that is easy to overlook when everything is working correctly: userspace does not need to do anything differently, applications keep using the GPU as before, and the improvement happens underneath, in the way the kernel manages the hardware.

Beyond improving raw GPU performance, our work at Igalia is also about making the upstream graphics stack behave better as a system: more efficient when idle, more robust across firmware interfaces, and better aligned with the expectations of the Linux kernel infrastructure.

[2] clk: bcm: rpi: Maximize V3D clock (Linux kernel source tree, torvalds/linux.git)

Software Engineering Radio is a podcast for people in IT/development with over 700 episodes across many topics over 20 years. They haven't touched on the Linux kernel much. I was invited on as part of my role at Red Hat as a Distinguished Engineer, but the podcast is really an insight into kernel maintenance, in graphics and beyond, touching on the scope and scale of the project.

It was the first time I recorded something that wasn't just me talking at a conference or meetup, and it was all very professional, with sound checks and brainstorming beforehand.

The content is pitched at a fairly broad, introductory level. We talked about kernel development and maintenance processes, and touched on Rust in the kernel a bit. It's mostly about the sheer size and scale of the project: how Linus releases things, how trees get to Linus, and how the GPU work is done.

Hopefully you enjoy listening to it!

[1] https://se-radio.net/2026/06/se-radio-723-dave-airlie-on-linux-kernel-maintenance/

Back when we started with a signed shim in Debian, the tooling was Windows-only and required me to do a reboot dance and it was all quite tedious. Over time, more and more of the tooling has migrated to Linux and it all works quite well.

The signing is done with an EV code signing cert from SSL.com, stored on a YubiKey. Getting the certificate onto the key is a bit tedious, but reasonably well explained in the SSL.com docs.

Microsoft wants the shim binaries uploaded to their partner portal wrapped in a .cab file, which should be signed.

The wrapping in a .cab file is easy enough:

```shell
lcab shim.efi shim-unsigned.cab
```

It’s fine to put shims for multiple architectures in the same .cab file.

Signing of the file is a little bit of a rune:

```shell
osslsigncode sign \
  -pkcs11module /usr/lib/x86_64-linux-gnu/libykcs11.so \
  -key "pkcs11:serial=XXX" -askpass \
  -certs chain.crt -h sha256 \
  -ts http://ts.ssl.com \
  shim-unsigned.cab shim-unsigned.signed.cab
```

chain.crt contains first our EV code signing cert, then the SSL.com intermediate EV code signing cert, then the SSL.com EV root cert. The naming is a tiny bit confusing, but it’s because the package name in Debian is shim-unsigned.

Occasionally, processing of uploaded binaries just stops in the validation stage in the portal, but I’ve so far been able to unstick them by re-signing and uploading again. I saw the same with the MS/Windows toolchain, so I suspect it’s just flakiness on the portal side.

It’s a question I had to ask myself multiple times over the last few months. Depending on the context the answer can be:

If you are an app developer, you’re lucky and it’s almost always the first answer. If you develop something with a security boundary which involves files in any way, the correct answer is very likely the second one.

Like so often, the details depend on the specifics, but in the worst-case scenario there is a process on each side of the security boundary, and both operate on a filesystem tree that they share.

Let’s say that the more privileged process operates on a file on behalf of the less privileged one. You might want to restrict this to files in a certain directory, to prevent the less privileged process from, for example, stealing your SSH key, and thus accept only a subpath relative to that directory.

The first obvious problem is that the subpath can refer to files outside of the directory if it contains “..” components. If the privileged process gets called with a subpath of ../.ssh/id_ed25519, you are in trouble. Easy fix: normalize the path, and if it ever goes outside of the directory, fail.
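That “easy fix” can be sketched as a purely lexical check that tracks directory depth and rejects any subpath that climbs above its starting point. The function name is hypothetical. As the next paragraphs explain, this check alone is not enough: it never looks at the filesystem, so symlinks and concurrent renames bypass it entirely.

```c
#include <stdbool.h>
#include <string.h>

/* Returns true if `subpath` stays inside its base directory when "." and
 * ".." are resolved purely lexically. Absolute paths are rejected.
 * Symlinks are NOT considered: this is the naive check. */
static bool lexically_beneath(const char *subpath)
{
    if (subpath[0] == '/')
        return false;

    int depth = 0;
    const char *p = subpath;
    while (*p != '\0') {
        /* Length of the current component, up to the next '/' or the end. */
        const char *end = strchr(p, '/');
        size_t len = end ? (size_t)(end - p) : strlen(p);

        if (len == 2 && p[0] == '.' && p[1] == '.') {
            if (--depth < 0)
                return false;   /* climbed above the base directory */
        } else if (len > 0 && !(len == 1 && p[0] == '.')) {
            depth++;            /* a normal name descends one level */
        }
        /* empty components ("//") and "." leave the depth unchanged */

        p += len;
        if (*p == '/')
            p++;
    }
    return true;
}
```

Note that a subpath like link passes this check even when link is a symlink to ../.ssh/id_ed25519.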

The next issue is that every component of the path might be a symlink. If the privileged process gets called with a subpath of link, and link is a symlink to ../.ssh/id_ed25519, you might be in trouble. If the process with less privileges cannot create files in that part of the tree, it cannot create a malicious symlink, and everything is fine. In all other scenarios, nothing is fine. Easy fix: resolve the symlinks, expand the path, then normalize it.

This is usually where most people think we’re done: opening a file is not that hard after all, so we can all move on to more fun things. Really, this is where the fun begins.

The fix above works as long as the less privileged process cannot change the filesystem tree anywhere along the file’s path while the more privileged process tries to access it. This is usually the case if you unpack an attacker-provided archive into a directory the attacker does not have access to. If it can, however, we have a classic TOCTOU (time-of-check to time-of-use) race.

We have the path foo/id_ed25519. We resolve the symlinks, expand the path, and normalize it, and while we do all of that, the other process replaces the regular directory foo that we just checked with a symlink pointing to ../.ssh. We have already verified that the path resolves to a location inside the target directory, so we happily open foo/id_ed25519, which now points to your SSH key. Not an easy fix.

So, what is the fundamental issue here? A path string like /home/user/.local/share/flatpak/app/org.example.App/deploy describes a location in a filesystem namespace. It is not a reference to a file. By the time you finish speaking the path aloud, the thing it names may have changed.

The safe primitive is the file descriptor. Once you have an fd pointing at an inode, the kernel pins that inode. The directory can be unlinked, renamed, or replaced with a symlink; the fd does not care. A common misconception is that file descriptors represent open files. They can, but an fd opened with O_PATH does not open the file for reading or writing, yet still provides a stable reference to an inode.
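A small Linux-only demonstration of that property: open a directory with O_PATH, rename it away (standing in for the other process), and resolve a file relative to the fd anyway. The helper name and the scratch-directory layout are hypothetical; it only needs a writable /tmp.

```c
#define _GNU_SOURCE
#include <fcntl.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/stat.h>
#include <unistd.h>

/* Returns 0 if a file is still reachable through an O_PATH directory fd
 * after the directory has been renamed away, -1 otherwise. */
static int opath_survives_rename(void)
{
    char base[] = "/tmp/opath-demo-XXXXXX";
    char dir[64], moved[64], file[64];

    if (mkdtemp(base) == NULL)
        return -1;
    snprintf(dir, sizeof dir, "%s/dir", base);
    snprintf(moved, sizeof moved, "%s/moved", base);
    snprintf(file, sizeof file, "%s/data", dir);

    int wfd;
    if (mkdir(dir, 0700) != 0 ||
        (wfd = open(file, O_WRONLY | O_CREAT | O_EXCL | O_CLOEXEC, 0600)) < 0)
        return -1;
    close(wfd);

    /* Pin the directory inode. O_PATH does not open it for I/O. */
    int dfd = open(dir, O_PATH | O_DIRECTORY | O_CLOEXEC);
    if (dfd < 0)
        return -1;

    /* Simulate the other process: the path "dir" no longer exists. */
    if (rename(dir, moved) != 0) {
        close(dfd);
        return -1;
    }

    /* Lookups relative to the fd still reach the same directory. */
    int fd = openat(dfd, "data", O_RDONLY | O_CLOEXEC);
    int result = fd >= 0 ? 0 : -1;
    if (fd >= 0)
        close(fd);

    /* Clean up the scratch tree (unlinkat accepts an O_PATH dirfd). */
    unlinkat(dfd, "data", 0);
    close(dfd);
    rmdir(moved);
    rmdir(base);
    return result;
}
```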

The lesson that should be learned here is that you should not call any privileged process with a path. Period. Passing in file descriptors also has the benefit that they serve as proof that the calling process actually has access to the resource.
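On Linux, handing an fd to another process goes through a Unix domain socket with an SCM_RIGHTS control message; this is also the mechanism D-Bus uses for its unix-fd (“h”) arguments. The sketch below sends an fd across a connected socket and receives it on the other end. The helper names are hypothetical, and in a real service the two ends would live in different processes.

```c
#define _GNU_SOURCE
#include <string.h>
#include <sys/socket.h>
#include <sys/uio.h>
#include <unistd.h>

/* Send one file descriptor over a connected Unix socket. */
static int send_fd(int sock, int fd)
{
    char byte = 0;  /* at least one byte of regular data must be sent */
    struct iovec iov = { .iov_base = &byte, .iov_len = 1 };
    union {
        struct cmsghdr align;  /* ensures correct alignment of buf */
        char buf[CMSG_SPACE(sizeof(int))];
    } u;
    memset(&u, 0, sizeof u);

    struct msghdr msg = {
        .msg_iov = &iov, .msg_iovlen = 1,
        .msg_control = u.buf, .msg_controllen = sizeof u.buf,
    };
    struct cmsghdr *cmsg = CMSG_FIRSTHDR(&msg);
    cmsg->cmsg_level = SOL_SOCKET;
    cmsg->cmsg_type = SCM_RIGHTS;
    cmsg->cmsg_len = CMSG_LEN(sizeof(int));
    memcpy(CMSG_DATA(cmsg), &fd, sizeof(int));

    return sendmsg(sock, &msg, 0) == 1 ? 0 : -1;
}

/* Receive one file descriptor; returns a new fd (close-on-exec) or -1. */
static int recv_fd(int sock)
{
    char byte;
    struct iovec iov = { .iov_base = &byte, .iov_len = 1 };
    union {
        struct cmsghdr align;
        char buf[CMSG_SPACE(sizeof(int))];
    } u;
    struct msghdr msg = {
        .msg_iov = &iov, .msg_iovlen = 1,
        .msg_control = u.buf, .msg_controllen = sizeof u.buf,
    };
    if (recvmsg(sock, &msg, MSG_CMSG_CLOEXEC) != 1)
        return -1;

    struct cmsghdr *cmsg = CMSG_FIRSTHDR(&msg);
    if (cmsg == NULL || cmsg->cmsg_level != SOL_SOCKET ||
        cmsg->cmsg_type != SCM_RIGHTS ||
        cmsg->cmsg_len != CMSG_LEN(sizeof(int)))
        return -1;

    int fd;
    memcpy(&fd, CMSG_DATA(cmsg), sizeof(int));
    return fd;
}
```

The receiver gets a new descriptor number that refers to the same open file, so the service never has to resolve a caller-supplied path.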

Another important lesson is that dropping down from a file descriptor to a path makes everything racy again. For example, let’s say that we want to bind mount something based on a file descriptor, and we only have the traditional mount API, so we convert the fd to a path, and pass that to mount. Unfortunately for the user, the kernel resolves the symlinks in the path that an attacker might have managed to place there. Sometimes it’s possible to detect the issue after the fact, for example by checking that the inode and device of the mounted file and the file descriptor match.
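That after-the-fact check can be as simple as comparing st_dev and st_ino of the fd with what the path resolves to now. The helper below is a hypothetical sketch of the idea. It cannot undo a mount that already happened; it only detects the mismatch so the caller can unmount and bail out.

```c
#define _GNU_SOURCE
#include <fcntl.h>      /* open() and O_PATH, used by callers */
#include <stdbool.h>
#include <sys/stat.h>

/* Returns true if `path` currently resolves to the same inode on the same
 * device as `fd`. A mismatch means the path no longer names what the fd
 * refers to, e.g. a component was swapped for a symlink in the meantime.
 *
 * Hypothetical usage after a path-based bind mount:
 *   int fd = open(src, O_PATH | O_CLOEXEC);
 *   ... mount(<path derived from fd>, target, NULL, MS_BIND, NULL) ...
 *   if (!fd_matches_path(fd, target)) { unmount target and fail }
 */
static bool fd_matches_path(int fd, const char *path)
{
    struct stat fd_st, path_st;

    if (fstat(fd, &fd_st) != 0 || stat(path, &path_st) != 0)
        return false;

    return fd_st.st_dev == path_st.st_dev && fd_st.st_ino == path_st.st_ino;
}
```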

With that being said, sometimes using paths is not entirely avoidable, so let’s look into that as well!

In the scenario above, we have a directory within which we want all paths to resolve, and which the attacker does not control. We can thus open it with O_PATH and get a file descriptor for it without the attacker being able to redirect it somewhere else.

With the openat syscall, we can open a path relative to the fd we just opened. It has all the same issues we discussed above, except that we can also pass O_NOFOLLOW. With that flag set, if the last segment of the path is a symlink, it does not follow it and instead opens the actual symlink inode. All the other components can still be symlinks, and they still will be followed. We can however just split up the path, and open the next file descriptor for the next path segment and resolve symlinks manually until we have done so for the entire path.
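A minimal sketch of that walk, assuming Linux (O_PATH). To keep it short, it refuses symlinks and “..” outright instead of resolving them manually, which makes it closer to a “no symlinks, stay beneath” lookup than a full resolver. The function name is hypothetical; a real implementation such as glnx_chaseat handles far more cases.

```c
#define _GNU_SOURCE
#include <errno.h>
#include <fcntl.h>
#include <string.h>
#include <sys/stat.h>
#include <unistd.h>

/* Walk `path` one component at a time starting at `dirfd`, never following
 * a symlink and never leaving the starting directory. Returns an
 * O_PATH | O_CLOEXEC fd for the final component, or -1 with errno set. */
static int walk_nofollow(int dirfd, const char *path)
{
    char buf[4096];
    if (path[0] == '/') {                  /* absolute paths escape dirfd */
        errno = EXDEV;
        return -1;
    }
    if (strlen(path) >= sizeof buf) {
        errno = ENAMETOOLONG;
        return -1;
    }
    strcpy(buf, path);

    int cur = fcntl(dirfd, F_DUPFD_CLOEXEC, 0);  /* own a copy of dirfd */
    if (cur < 0)
        return -1;

    char *save = NULL;
    for (char *comp = strtok_r(buf, "/", &save); comp != NULL;
         comp = strtok_r(NULL, "/", &save)) {
        if (strcmp(comp, ".") == 0)
            continue;
        if (strcmp(comp, "..") == 0) {     /* refuse to climb upwards */
            close(cur);
            errno = EXDEV;
            return -1;
        }

        /* With O_NOFOLLOW | O_PATH, a symlink yields an fd for the link. */
        int next = openat(cur, comp, O_PATH | O_NOFOLLOW | O_CLOEXEC);
        int saved = errno;
        close(cur);                        /* the parent is no longer needed */
        if (next < 0) {
            errno = saved;
            return -1;
        }

        struct stat st;
        if (fstat(next, &st) != 0) {
            saved = errno;
            close(next);
            errno = saved;
            return -1;
        }
        if (S_ISLNK(st.st_mode)) {         /* refuse symlinks entirely */
            close(next);
            errno = ELOOP;
            return -1;
        }
        cur = next;  /* each step is resolved relative to a pinned inode */
    }
    return cur;
}
```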

libglnx is a utility library for GNOME C projects that provides fd-based filesystem operations as its primary API. Functions like glnx_openat_rdonly, glnx_file_replace_contents_at, and glnx_tmpfile_link_at all take directory fds and operate relative to them. The library is built around the discipline of “always have an fd, never use an absolute path when you can use an fd.”

The most recent addition is glnx_chaseat, which provides safe path traversal. It was inspired by systemd’s chase() and does precisely what was described above.

```c
int glnx_chaseat (int             dirfd,
                  const char     *path,
                  GlnxChaseFlags  flags,
                  GError        **error);
```

It returns an O_PATH | O_CLOEXEC fd for the resolved path, or -1 on error. The real magic is in the flags:

```c
typedef enum _GlnxChaseFlags {
  /* Default */
  GLNX_CHASE_DEFAULT = 0,
  /* Disable triggering of automounts */
  GLNX_CHASE_NO_AUTOMOUNT = 1 << 1,
  /* Do not follow the path's right-most component. When the path's right-most
   * component refers to symlink, return O_PATH fd of the symlink. */
  GLNX_CHASE_NOFOLLOW = 1 << 2,
  /* Do not permit the path resolution to succeed if any component of the
   * resolution is not a descendant of the directory indicated by dirfd. */
  GLNX_CHASE_RESOLVE_BENEATH = 1 << 3,
  /* Symlinks are resolved relative to the given dirfd instead of root. */
  GLNX_CHASE_RESOLVE_IN_ROOT = 1 << 4,
  /* Fail if any symlink is encountered. */
  GLNX_CHASE_RESOLVE_NO_SYMLINKS = 1 << 5,
  /* Fail if the path's right-most component is not a regular file */
  GLNX_CHASE_MUST_BE_REGULAR = 1 << 6,
  /* Fail if the path's right-most component is not a directory */
  GLNX_CHASE_MUST_BE_DIRECTORY = 1 << 7,
  /* Fail if the path's right-most component is not a socket */
  GLNX_CHASE_MUST_BE_SOCKET = 1 << 8,
} GlnxChaseFlags;
```

While it doesn’t sound too complicated to implement, a lot of details are quite hairy. The implementation uses openat2, open_tree and openat depending on what is available and what behavior was requested, it handles auto-mount behavior, ensures that previously visited paths have not changed, and a few other things.
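For comparison, on kernels since Linux 5.6 the openat2() syscall can enforce containment in a single call through its resolve flags, such as RESOLVE_BENEATH. The sketch below assumes kernel headers new enough to provide <linux/openat2.h> and SYS_openat2, and a libc without its own openat2 wrapper, so it goes through syscall(). The helper name is hypothetical, and it only shows the kernel primitive, not how glnx_chaseat combines it with its fallbacks.

```c
#define _GNU_SOURCE
#include <errno.h>
#include <fcntl.h>
#include <linux/openat2.h>   /* struct open_how, RESOLVE_* flags */
#include <sys/syscall.h>
#include <unistd.h>

/* Open `path` relative to `dirfd`, failing if resolution would escape
 * `dirfd` via "..", an absolute path, or a symlink pointing outside.
 * Returns an O_PATH fd, or -1 with errno set (ENOSYS where unsupported). */
static int open_beneath(int dirfd, const char *path)
{
#ifdef SYS_openat2
    struct open_how how = {
        .flags = O_PATH | O_CLOEXEC,
        .resolve = RESOLVE_BENEATH | RESOLVE_NO_MAGICLINKS,
    };
    return (int)syscall(SYS_openat2, dirfd, path, &how, sizeof how);
#else
    (void)dirfd;
    (void)path;
    errno = ENOSYS;
    return -1;
#endif
}
```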

The POSIX APIs are not great at dealing with the issue. The GLib/Gio APIs (GFile, etc.) are even worse and only accept paths. Granted, they also serve as a cross-platform abstraction where file descriptors are not a universal concept. Unfortunately, Rust also has this cross-platform abstraction which is based entirely on paths.

If you use any of those APIs, you very likely created a vulnerability. The deeper issue is that those path-based APIs are often the standard way to interact with files. This makes it impossible to reason about the security of composed code. You can audit your own code meticulously, open everything with O_PATH | O_NOFOLLOW, chain *at() calls carefully — and then call a third-party library that calls open(path) internally. The security property you established in your code does not compose through that library call.

This means that any system-level code that cares about filesystem security has to audit all transitive dependencies or avoid them in the first place.

So what would a better GLib cross-platform API look like? I would say not too different from chaseat(), but returning opaque handles instead of file descriptors, which on Unix would carry the O_PATH file descriptor and a path that can be used for printing, debugging and things like that. You would open files from those handles, which would yield another kind of opaque handle for reading, writing, and so on.
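To make the proposal a little more concrete, here is a hypothetical C sketch of such a handle on Unix. It carries the O_PATH fd that is used for all resolution, plus a path string that is only ever used for display. None of these names exist in GLib; they only illustrate the shape. For brevity, child lookup takes a single component with openat() and O_NOFOLLOW rather than a full chaseat()-style walk.

```c
#define _GNU_SOURCE
#include <fcntl.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>
#include <unistd.h>

/* Hypothetical opaque location handle. The fd is authoritative; the path
 * is only for printing and debugging and is never used to open anything. */
typedef struct {
    int fd;              /* O_PATH | O_CLOEXEC fd pinning the inode */
    char *display_path;  /* human-readable, may become stale */
} Location;

static void location_free(Location *loc)
{
    if (loc == NULL)
        return;
    close(loc->fd);
    free(loc->display_path);
    free(loc);
}

/* Takes ownership of fd and display_path; cleans up on failure. */
static Location *location_wrap(int fd, char *display_path)
{
    Location *loc = malloc(sizeof *loc);
    if (loc == NULL || display_path == NULL) {
        free(loc);
        free(display_path);
        close(fd);
        return NULL;
    }
    loc->fd = fd;
    loc->display_path = display_path;
    return loc;
}

/* Entry point from a trusted path (e.g. configuration), resolved once. */
static Location *location_new_for_dir(const char *path)
{
    int fd = open(path, O_PATH | O_DIRECTORY | O_CLOEXEC);
    return fd < 0 ? NULL : location_wrap(fd, strdup(path));
}

/* Resolve exactly one child component relative to the parent's fd. */
static Location *location_get_child(const Location *parent, const char *name)
{
    if (name[0] == '\0' || strchr(name, '/') != NULL || strcmp(name, "..") == 0)
        return NULL;
    int fd = openat(parent->fd, name, O_PATH | O_NOFOLLOW | O_CLOEXEC);
    if (fd < 0)
        return NULL;
    size_t plen = strlen(parent->display_path);
    const char *sep = (plen > 0 && parent->display_path[plen - 1] == '/') ? "" : "/";
    size_t len = plen + strlen(sep) + strlen(name) + 1;
    char *display = malloc(len);
    if (display != NULL)
        snprintf(display, len, "%s%s%s", parent->display_path, sep, name);
    return location_wrap(fd, display);
}

/* Opening for I/O yields a different kind of handle (here simply an fd). */
static int location_open_child_for_read(const Location *dir, const char *name)
{
    if (strchr(name, '/') != NULL || strcmp(name, "..") == 0)
        return -1;
    return openat(dir->fd, name, O_RDONLY | O_NOFOLLOW | O_CLOEXEC);
}
```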

The current GFile was also designed to implement GVfs: g_file_new_for_uri("smb://server/share/file") gives you a GFile you can g_file_read() just like a local file. This is the right goal, but the wrong abstraction layer. Instead, this kind of access should be provided by FUSE, and the URI should be translated to a path on a specific FUSE mount. This would provide a few benefits:

Nowadays I maintain a small project called Flatpak. Codean Labs recently did a security analysis on it and found a number of issues. Even though Flatpak developers were aware of the dangers of filesystems, and created libglnx because of it, most of the discovered issues were just about that. One of them (CVE-2026-34078) was a complete sandbox escape.

flatpak run was designed as a command-line tool for trusted users. When you type flatpak run org.example.App, you control the arguments. The code that processes the arguments was written assuming the caller is legitimate. It accepted path strings, because that’s what command-line tools accept.

The Flatpak portal was then built as a D-Bus service that sandboxed apps could call to start subsandboxes — and it did this by effectively constructing a flatpak run invocation and executing it. This connected a component designed for trusted input directly to an untrusted caller (the sandboxed app).

Once that connection exists, every assumption baked into flatpak run about caller trustworthiness becomes a potential vulnerability. The fix wasn’t “change one function” — it was “audit the entire call chain from portal request to bubblewrap execution and replace every path string with an fd.” That’s commits touching the portal, flatpak-run, flatpak_run_app, flatpak_run_setup_base_argv, and the bwrap argument construction, plus new options (--app-fd, --usr-fd, --bind-fd, --ro-bind-fd) threaded through all of them.

If the GLib standard file and path APIs were secure, we would not have had this issue.

Another annoyance here is that the entire subsandboxing approach in Flatpak comes from 15 years ago, when unprivileged user namespaces were not common. Nowadays we could (and should) let apps use kernel-native unprivileged user namespaces to create their own subsandboxes.

Unfortunately with rather large changes comes a high likelihood of something going wrong. For a few days we scrambled to fix a few regressions that prevented Steam, WebKit, and Chromium-based apps from launching. Huge thanks to Simon McVittie!

In the end, we managed to fix everything, made Flatpak more secure, the ecosystem is now better equipped to handle this class of issues, and hopefully you learned something as well.

If you have recently installed a very up-to-date Linux distribution with a desktop environment, or upgraded your system on a rolling-release distribution, you might have noticed that your home directory has a new folder: “Projects”

With the recent 0.20 release of xdg-user-dirs we enabled the “Projects” directory by default. Support for this has existed since 2007, but was never formally enabled. This closes a bug report, more than 11 years old, that asked for this feature.

The purpose of the Projects directory is to give applications a default location to place project files that do not cleanly belong in one of the existing categories (Documents, Music, Pictures, Videos). Examples of this are software engineering projects, scientific projects, 3D printing projects, CAD design or even things like video editing projects, where project files would end up in the “Projects” directory, with output video being more at home in “Videos”.

By enabling this by default, and adding support to GLib, Flatpak, desktops and applications that want to make use of it over the coming months, we hope to give applications that operate in a “project-centric” manner with mixed media a better default storage location. As of now, those tools either default to the home directory or clutter the “Documents” folder, neither of which is ideal. It also gives users a default organization structure, hopefully leading to less clutter overall and better storage layouts.

As usual, you are in control and can modify your system’s behavior. If you do not like the “Projects” folder, simply delete it! The xdg-user-dirs utility will not try to create it again, and instead adjust the default location for this directory to your home directory. If you want more control, you can influence exactly what goes where by editing your ~/.config/user-dirs.dirs configuration file.

If you are a system administrator or distribution vendor and want to set default locations for the default XDG directories, you can edit the /etc/xdg/user-dirs.defaults file to set global defaults that affect all users on the system (users can still adjust the settings however they like though).

Besides this change, the 0.20 release of xdg-user-dirs brings full support for the Meson build system (dropping Automake), translation updates, and some robustness improvements to its code. We also fixed the “arbitrary code execution from unsanitized input” bug that the Arch Linux Wiki mentions for the xdg-user-dirs utility, by replacing the shell script with a C binary.

Thanks to everyone who contributed to this release!

This post attempts to explain how Huion tablet devices currently integrate into the desktop stack. I'll touch a bit on the Huion driver and the OpenTablet driver but primarily this explains the intended integration[1]. While I have access to some Huion devices and have seen reports from others, there are likely devices that are slightly different. Huion's vendor ID is also used by other devices (UCLogic and Gaomon) so this applies to those devices as well.

This post was written without AI support, so any errors are organic, artisanal, hand-crafted ones. Enjoy.

First, a short overview of the ideal graphics tablet stack in current desktops. At the bottom is the physical device, which contains a significant amount of firmware. That device provides something resembling the HID protocol over the wire (or Bluetooth) to the kernel. The kernel typically handles this via the generic HID drivers [2] and provides us with a /dev/input/event evdev node, ideally one for the pen (and any other tool) and one for the pad (the buttons/rings/wheels/dials on the physical tablet). libinput then interprets the data from these event nodes and passes it on to the compositor, which then passes it via Wayland to the client. Here's a simplified illustration of this:

Unlike the X11 API, libinput's API works on both a per-tablet and a per-tool basis. In other words, when you plug in a tablet you get a libinput device that has a tablet tool capability and (optionally) a tablet pad capability. But the tool will only show up once you bring it into proximity. Wacom tools have sufficient identifiers that we can a) know what tool it is and b) get a unique serial number for that particular device. This means you could, if you wanted to, track your physical tool as it is used on multiple devices. No-one [3] does this but it's possible. More interesting is that because of this you can also configure the tools individually, with different pressure curves, etc. This was possible with the xf86-input-wacom driver in X but only with some extra configuration; libinput provides/requires this as the default behaviour.

The most prominent case for this is the eraser, which is present on virtually all pen-like tools, though some have an eraser at the tail end and others (the numerically vast majority) have it hardcoded on one of the buttons. Changing to eraser mode will create a new tool (the eraser) and bring it into proximity; that eraser tool is logically separate from the pen tool and can thus be configured differently. [4]

Another effect of this per-tool behaviour is that we know exactly what a tool can do. If you use two different styli with different capabilities (e.g. one with tilt and 2 buttons, one without tilt and 3 buttons), they will have the right bits set. This requires libwacom, a library that tells us, simply: any tool with id 0x1234 has N buttons and capabilities A, B and C. libwacom is just a bunch of static text files with a C library wrapped around those. Without libwacom, we cannot know what any individual tool can do: the firmware and kernel always expose the capability set of all tools that can be used on any particular tablet. For example, Wacom's devices support an airbrush tool, so any tablet plugged in will announce the capabilities for an airbrush even though >99% of users will never use an airbrush [5].
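The idea can be sketched as a static table keyed by tool ID, with a fallback to “everything the tablet announced” when the tool is unknown. The IDs, capability names and table layout below are hypothetical (0x1234 is the placeholder from the paragraph above, and the two entries mirror the two-styli example); libwacom itself loads this data from its text files and exposes its own C API.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical capability bits for a stylus-like tool. */
enum tool_caps {
    CAP_PRESSURE = 1u << 0,
    CAP_TILT     = 1u << 1,
    CAP_ERASER   = 1u << 2,
    CAP_AIRBRUSH = 1u << 3,  /* airbrush-specific axes */
};

struct tool_info {
    uint32_t tool_id;
    unsigned num_buttons;
    unsigned caps;
};

/* Static database: one entry per known tool ID (IDs are made up). */
static const struct tool_info tool_db[] = {
    { 0x1234, 2, CAP_PRESSURE | CAP_TILT | CAP_ERASER },
    { 0x5678, 3, CAP_PRESSURE },
};

/* Look up a tool. Unknown tools fall back to what the kernel device
 * announced, i.e. the union of all tools usable on that tablet. */
static struct tool_info lookup_tool(uint32_t tool_id,
                                    unsigned device_buttons,
                                    unsigned device_caps)
{
    for (size_t i = 0; i < sizeof tool_db / sizeof tool_db[0]; i++) {
        if (tool_db[i].tool_id == tool_id)
            return tool_db[i];
    }
    struct tool_info fallback = { tool_id, device_buttons, device_caps };
    return fallback;
}
```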

The compositor then takes the libinput events, modifies them (e.g. pressure curve handling is done by the compositor) and passes them via the Wayland protocol to the client. That protocol is a pretty close mirror of the libinput API so it works mostly the same. From then on, the rest is up to the application/toolkit.

Notably, libinput is a hardware abstraction layer and conversion of hardware events into others is generally left to the compositor. IOW if you want a button to generate a key event, that's done either in the compositor or in the application/toolkit. But the current versions of libinput and the Wayland protocol do support all hardware features we're currently aware of: the various stylus types (including Wacom's lens cursor and mouse-like "puck" devices) and buttons, rings, wheels/dials, and touchstrips on pads. We even support the rather once-off Dell Canvas Totem device.

Huion's devices are HID compatible which means they "work" out of the box but they come in two different modes, let's call them firmware mode and tablet mode. Each tablet device pretends to be three HID devices on the wire and depending on the mode some of those devices won't send events.

This is the default mode after plugging the device in. Two of the HID devices exposed look like a tablet stylus and a keyboard. The tablet stylus is usually correct (enough) to work OOTB with the generic kernel drivers, it exports the buttons, pressure, tilt, etc. The buttons and strips/wheels/dials on the tablet are configured to send key events. For example, the Inspiroy 2S I have sends b/i/e/Ctrl+S/space/Ctrl+Alt+z for the buttons and the roller wheel sends Ctrl-/Ctrl= depending on direction. The latter are often interpreted as zoom in/out so hooray, things work OOTB. Other Huion devices have similar bindings, there is quite some overlap but not all devices have exactly the same key assignments for each button. It does of course get a lot more interesting when you want a button to do something different - you need to remap the key event (ideally without messing up your key map lest you need to type an 'e' later).

The userspace part is effectively the same, so here's a simplified illustration of what happens in kernel land:

Any vendor-specific data is discarded by the kernel (but in this mode that HID device doesn't send events anyway).

If you read a special USB string descriptor from the English language ID, the device switches into tablet mode. Once in tablet mode, the HID tablet stylus and keyboard devices will stop sending events and instead all events from the device are sent via the third HID device, which consists of a single vendor-specific report descriptor (read: 11 bytes of "here be magic"). Those bits represent the various features on the device, including the stylus features and all pad features as buttons/wheels/rings/strips (and not key events!). This mode is the one we want to handle the tablet properly. The kernel's hid-uclogic driver switches into tablet mode for supported devices; in userspace you can use e.g. huion-switcher. The device cannot be switched back to firmware mode but will return to firmware mode once unplugged.

Once we have the device in tablet mode, we can get true tablet data and pass it on through our intended desktop stack. Alas, like ogres there are layers.

Historically, and thanks in large part to the now-discontinued digimend project, the hid-uclogic kernel driver handled the switch into tablet mode, followed by report descriptor mangling (inside the kernel) so that the resulting devices could be handled by the generic HID drivers. The more modern approach we are pushing for is to use udev-hid-bpf, which is quite a bit easier to develop for. But both do effectively the same thing: they overlay the vendor-specific data with a normal HID report descriptor so that the incoming data can be handled by the generic HID kernel drivers. This looks like this:

Notable here: the stylus and keyboard devices may still exist and get event nodes but never send events[6], while the uclogic/bpf-enabled device provides proper stylus/pad event nodes that can be handled by libinput (and thus the rest), with raw hardware data where buttons are buttons.

Because in true manager speak we don't have problems, just challenges. And oh boy, we collect challenges as if we were organising the Olympics.

First and probably most embarrassing is that hid-uclogic has a different way of exposing event nodes than what libinput expects. This is largely my fault for having focused on Wacom devices and internalized their behaviour for many years. The hid-uclogic driver exports the wheels and strips on separate event nodes - libinput doesn't handle this correctly (or at all). That'd be fixable, but the compositors don't really expect this either, so there's a bit more work involved; the immediate effect is that those wheels/strips will likely be ignored and not work correctly. Buttons and pens work.

hid-uclogic, being a kernel driver, has access to the underlying USB device. The HID-BPF hooks in the kernel currently do not, so we cannot switch the device into tablet mode from a BPF; it needs to be in tablet mode already. This means we need a userspace tool (read: huion-switcher) triggered via udev on plug-in, before the udev-hid-bpf udev rules trigger. Not a problem, but it's one more moving piece that needs to be present (but boy, does this feel like the unix way...).

By far the most annoying part about anything Huion is that until relatively recently (I don't have a date but maybe until 2 years ago) all of Huion's devices shared the same few USB product IDs. For most of these devices we worked around it by matching on device names, but there were devices that had the same product ID and device name. At some point libwacom, the kernel and huion-switcher had to implement firmware ID extraction and matching so we could distinguish between devices with the same 256c:006d USB IDs. Luckily this seems to be in the past now, with modern devices getting new PIDs for each individual device. But if you have an older device, expect difficulties and, worse, things potentially breaking after firmware updates when/if the firmware identification string changes. udev-hid-bpf (and uclogic) rely on the firmware strings to identify the device correctly.
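To make the shared-ID problem concrete, here is a minimal Python sketch of the matching logic described above. It is not the code libwacom, the kernel or huion-switcher actually use. It assumes Huion's USB vendor ID is 0x256c with 0x006d as the shared product ID; the model names, the unique PID 0x1234 and the firmware prefixes are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional

HUION_VID = 0x256C
# Assumption: (vid, pid) pairs that many different models share.
SHARED_IDS = {(HUION_VID, 0x006D)}


@dataclass(frozen=True)
class DeviceEntry:
    name: str
    vid: int
    pid: int
    # Only consulted for shared (vid, pid) pairs; values below are hypothetical.
    firmware_prefix: Optional[str] = None


def match_device(entries, vid, pid, firmware_id):
    """Return the entry describing this device, or None if it is unknown.

    A unique (vid, pid) is enough on its own. On a shared (vid, pid), the
    firmware ID string must also start with the entry's prefix.
    """
    for entry in entries:
        if (entry.vid, entry.pid) != (vid, pid):
            continue
        if (vid, pid) not in SHARED_IDS:
            return entry
        if entry.firmware_prefix and firmware_id.startswith(entry.firmware_prefix):
            return entry
    return None


# Hypothetical database: names, the 0x1234 PID and prefixes are invented.
DATABASE = [
    DeviceEntry("Model A", HUION_VID, 0x006D, "FW_MODEL_A_"),
    DeviceEntry("Model B", HUION_VID, 0x006D, "FW_MODEL_B_"),
    DeviceEntry("Model C", HUION_VID, 0x1234),
]
```

A device on the shared ID whose firmware string matches no known prefix gets no entry at all, which is exactly how a firmware update that changes the identification string can break a previously working tablet.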

edit: and of course less than 24h after posting this I processed a bug report about two completely different new devices sharing one of the product IDs

Because we have a changeover from the hid-uclogic kernel driver to the udev-hid-bpf files, there are rough edges around "where does this device go". The general rule is now: if it's not a shared product ID (see above), it should go into udev-hid-bpf and not the uclogic driver. Easier to maintain, much more fire-and-forget. Devices already supported by hid-uclogic will remain there; we won't implement BPFs for those (older) devices, doubly so because of the aforementioned libinput difficulties with some hid-uclogic features.

The newer tablets are always slightly different, so we basically need to reverse-engineer each tablet to get it working. That's common enough for any device, but we do rely on volunteers to do this. Mind you, the udev-hid-bpf approach is much simpler than doing it in the kernel, much of it is now copy-paste, and I've even had quite some success getting e.g. Claude Code to spit out a 90% correct BPF on its first try. At least the advantage of our approach of changing the report descriptor is that once it's done, it's done forever: there is no maintenance required because it's a static array of bytes that doesn't ever change.

Because we're abstracting the hardware, userspace needs to be fully plumbed. This was a problem last year, for example, when we (slowly) got support for relative wheels into libinput, then wayland, then the compositors, then the toolkits to make it available to the applications (of which I think none so far use the wheels). Depending on how fast your distribution moves, this may mean that support is months or years off even when everything has been implemented. On the plus side, these new features tend to only appear once every few years. Nonetheless, it's not hard to see why the "just send Ctrl=, that'll do" approach is preferred by many users over "probably everything will work in 2027, I'm sure".

A currently unsolved problem is the lack of tool IDs on all Huion tools. We cannot know if the tool used is the two-button + eraser PW600L, the three-button-one-is-an-eraser-button PW600S, or the two-button PW550 (I don't know if it's really 2 buttons or 1 button + eraser button). We always had this problem with e.g. the now quite old Wacom Bamboo devices, but those pens all had the same functionality so it just didn't matter. It would matter less if the various pens only worked on the device they ship with, but it's apparently quite possible to use a 3-button pen on a tablet that shipped with a 2-button pen OOTB. This is not difficult to solve (pretend to support all possible buttons on all tools), but it's frustrating because it removes a bunch of UI niceties that we've had for years - such as the pen settings only showing buttons that actually exist. Anyway, a problem currently in the "how I wish there was time" basket.

Overall, we are in an ok state, but not as good as we are for Wacom devices. The lack of tool IDs is the only thing not fixable without Huion changing the hardware[7]. The delay between a new device release and driver support really just depends on one motivated person reverse-engineering it (our BPFs can work across kernel versions and you can literally download them from a successful CI pipeline). The hid-uclogic split should become less painful over time, as should the shared USB product IDs as those devices age into landfill, and even more so if libinput gains support for the separate event nodes for wheels/strips/... (there is currently no plan and I'm somewhat questioning whether anyone really cares). But other than that, our main feature gap is really the ability for much more flexible configuration of buttons/wheels/... in all compositors - having that would likely make the requirement for OpenTabletDriver and the Huion tablet driver disappear.

The final topic here: what about the existing non-kernel drivers?

Both of these are userspace HID input drivers which use the same approach: read from a /dev/hidraw node, create a uinput device and pass events back. On the plus side, this means you can do literally anything that the input subsystem supports, at the cost of a context switch for every input event. Again, here's a diagram of what this looks like (mostly) below userspace:

Note how the kernel's HID devices are not exercised here at all because we parse the vendor report, create our own custom (separate) uinput device(s) and then basically re-implement the HID to evdev event mapping. This allows for great flexibility (and control, hence the vendor drivers are shipped this way) because any remapping can be done before you hit uinput. I don't immediately know whether OpenTabletDriver switches to firmware mode or maps the tablet mode but architecturally it doesn't make much difference.

From a security perspective: having a userspace driver means you either need to run that driver daemon as root or (in the case of OpenTabletDriver at least) you need to allow uaccess to /dev/uinput, usually via udev rules. Once those are installed, anything can create uinput devices, which is a risk, though how much of one is up for interpretation.

[1] As is so often the case, even the intended state does not necessarily spark joy

[2] Again, we're talking about the intended case here...

[3] fsvo "no-one"

[4] The xf86-input-wacom driver always initialises a separate eraser tool even if you never press that button

[5] For historical reasons those are also multiplexed so getting ABS_Z on a device has different meanings depending on the tool currently in proximity

[6] In our udev-hid-bpf BPFs we hide those devices so you really only get the correct event nodes, I'm not immediately sure what hid-uclogic does

[7] At which point Pandora will once again open the box because most of the stack is not yet ready for non-Wacom tool ids

It may sound unbelievable to some, but not everyone has a datacenter beast with 128GB of VRAM shoved in their desktop PCs. Around the world people tell the tale of a particularly fierce group of Linux gamers: Those who dare attempt to play games with only 8 gigabytes of VRAM, or even less. Truly, it takes exceedingly strong resilience and determination to face the stutters and slowdowns bound to occur when the system starts running low on free VRAM. Carnage erupts inside the kernel driver as every application fights for as much GPU memory as it can hold on to. Any game caught up in this battle for resources will surely not leave unscathed.

That is, until now. Because I fixed it.

A: You need some kernel patches as well as additional utilities to make use of the kernel capabilities properly.

The simplest option is to use CachyOS (with KDE as your desktop). Their kernel includes the patches you need from version 7.0rc7-2 and up, and the userspace utilities are available in the package repositories. All you need to do is use CachyOS’s 7.0rc7-2 kernel, install the packages called dmemcg-booster and plasma-foreground-booster, and you should be good to go.

UPDATE: CachyOS’s 6.19.12 kernel also includes this, now. No need to use the -rc kernel anymore.

The dmemcg-booster and plasma-foreground-booster utilities are available in the AUR as well (plasma-foreground-booster carries the package name plasma-foreground-booster-dmemcg), so you can install them from there.

For the kernel side, you can either use the CachyOS kernel package on a non-CachyOS system by retrieving the package from their repository, or you can compile your own kernel. Installing linux-dmemcg from the AUR will compile the development branch I used to develop this.

Being a development branch, this carries the risk of some stuff being broken, so install at your own risk!

If you want to apply the kernel patches yourself, you need these six .patch files:

Patch 1

Patch 2

Patch 3

Patch 4

Patch 5

Patch 6

I’m not sure how easily they apply on specific kernel versions, but feel free to leave a comment if you run into issues and I’ll try to help out.

Maybe wait a bit. Eventually I’d expect this to trickle down into more distros. If I notice this work being packaged by other distros or being installable by other means, I will update this blogpost.

A: For games where you care about VRAM usage, you can use newer versions of gamescope. Newer versions of gamescope will also try to make use of these kernel capabilities, so running your games through that should be sufficient. You will still need the dmemcg-booster utility in any case.

A: All the user-space utilities hard-depend on systemd. Without systemd, you’d need to write your own utilities that make use of my kernel patches. Something needs to manage cgroups in your system, and that something needs to enable the right cgroup controllers and set the right limits (see also the long-winded explanation about how this works).

Let’s first look at what problems we actually run into when we have games running on GPUs with little VRAM.

On a standard desktop system, the game won’t be the only application running on the GPU. If it’s anything like my system, there’s always at least one browser window with way too many tabs open, plus an assortment of apps (many of which are actually web apps running in their own browsers under the hood). All of this eats up quite a bit of VRAM as well.

To properly stress-test kernel memory management when working on this issue, I would go ahead and open up nearly every app with an integrated browser engine that I had installed. Viewed in amdgpu_top, the result of that looks something like this:


Ouch, there goes 1/4 of VRAM. Now, let’s try and launch Cyberpunk 2077 on top of that:


As expected, the game uses a lot of VRAM (I cranked the settings really high). However, a lot of memory allocations also end up in a memory region referred to as “GTT”. This is memory that is accessible by the GPU, but physically located in system RAM. From the GPU’s point of view, system RAM has to be accessed over the PCI bus, which is typically really, really slow. On my system, instead of the 256GB/s bandwidth VRAM could provide, we’re suddenly stuck with a meager 16GB/s at absolute maximum, paired with significantly worse latency.
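To put those two bandwidth numbers side by side, here is a back-of-the-envelope Python calculation. The 1 GB of per-frame memory traffic is a hypothetical figure, and the model ignores latency entirely, which only makes GTT look better than it really is.

```python
GB = 1e9  # decimal gigabytes, matching the GB/s figures in the text

VRAM_BANDWIDTH = 256 * GB   # bytes/s from local VRAM
PCIE_BANDWIDTH = 16 * GB    # bytes/s, best case over the bus to system RAM


def transfer_ms(size_bytes, bandwidth):
    """Milliseconds needed to stream size_bytes at the given bandwidth."""
    return size_bytes / bandwidth * 1000.0


frame_budget_ms = 1000.0 / 60   # one frame at 60 fps
working_set = 1 * GB            # hypothetical memory traffic per frame

vram_ms = transfer_ms(working_set, VRAM_BANDWIDTH)
gtt_ms = transfer_ms(working_set, PCIE_BANDWIDTH)
print(f"VRAM: {vram_ms:.1f} ms, GTT: {gtt_ms:.1f} ms, budget: {frame_budget_ms:.1f} ms")
```

With these inputs, streaming the same 1 GB takes about 3.9 ms from VRAM but 62.5 ms over the bus, far more than the roughly 16.7 ms a 60 fps frame has.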

Some amount of memory landing in GTT is normal - many games will intentionally allocate memory in GTT because it is advantageous for some use cases. However, Cyberpunk 2077 only allocates a fixed amount of around 650MB of memory in GTT, and far more than that ended up there. What happened instead is that the game requested some memory allocations in VRAM, but somehow, they ended up in GTT!

In kernel land, this process is referred to as eviction. The system in total tried to use more VRAM than there was available at all, so something had to give. Instead of telling the app that memory allocation failed (which would mean a near-certain application crash), the kernel decides to kick some memory out of VRAM to make everything fit. This degrades performance, but at least it allows every app to continue running. Nice! If only it would evict literally anything other than the game, which is the very thing that suffers the worst from having its memory evicted. Why in the world would it decide on that????

Memory eviction and behavior under VRAM pressure are by no means new issues. Over the course of time, different approaches have tried tackling different associated issues, and those different approaches introduced new issues themselves.

In the beginning, things worked rather simplistically: if applications wanted VRAM allocations, the user-mode driver would go to the kernel-mode driver and request VRAM memory. Save for some exceptional cases, that request would be granted, and that memory would be kept in VRAM. If another application requested VRAM allocations and the first application's memory was kicked out as a result, the kernel driver would move that memory back into VRAM the next time work using it was submitted to the GPU.

This worked quite horribly. Generally, two competing applications can be expected to roughly take turns executing GPU work - first one application submits work, then the other, then the first again, and so on. With that approach, memory would keep being moved back and forth after every single submission: one application's memory gets kicked out and is immediately moved back in, kicking the other application's memory out (which in turn moves back in at the next step). All this moving ended up with worse performance than if the memory had never been moved in the first place.
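The ping-pong effect is easy to reproduce in a toy model. This Python sketch is not how any kernel driver is structured; it simply assumes every submission forces the submitting app's whole working set into VRAM, evicting other apps as needed, and counts how much memory (in arbitrary units) moves as a result.

```python
def simulate_vram_only(vram_capacity, working_sets, submissions):
    """Toy model of the early policy: before each submission, the submitting
    app's memory is moved (back) into VRAM, evicting other apps as needed.

    working_sets maps app name -> amount it needs in VRAM (each must fit in
    VRAM on its own). Returns the total amount moved in or out of VRAM,
    including the initial uploads.
    """
    resident = {app: 0 for app in working_sets}  # amount of each app in VRAM
    moved = 0
    for app in submissions:
        need = working_sets[app] - resident[app]
        free = vram_capacity - sum(resident.values())
        # Kick out other apps' memory until the submitting app fits.
        for other in resident:
            if need <= free:
                break
            if other == app:
                continue
            evicted = min(resident[other], need - free)
            resident[other] -= evicted
            free += evicted
            moved += evicted
        # Move the submitting app's missing memory back in.
        resident[app] += need
        moved += need
    return moved
```

With a VRAM capacity of 10 and two apps needing 5 each, eight alternating submissions only cost the initial 10 units of uploads. Shrink VRAM to 8 and the same eight submissions move 36 units, because every submission after the first two evicts 2 units and moves 2 units back in.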

The first bandaid solution was to rate-limit memory movement inside the kernel driver. Once the kernel driver moved enough memory within a specific time frame to trigger a limit, no more memory would be moved for some more time. This indeed reduced moves, but didn’t do anything to fix the underlying issue of repeated cyclic memory movements. Worse yet, repeatedly running into this ratelimit would introduce annoying jitters and stutters as the kernel driver rapidly alternated between moving memory and doing nothing.

Eventually, to combat the still-existing overhead of repeatedly moving memory, user-mode drivers changed their allocation strategy. Instead of specifying VRAM as the only acceptable domain to place the allocation in, every VRAM allocation request would specify both “VRAM” and “GTT” as possible memory domains. The kernel would interpret this as VRAM being preferred, but if there was no space, GTT was an acceptable fallback and the kernel wouldn’t try to kick out other VRAM memory to make space.

This change entirely stopped the issue with memory repeatedly moving in and out of VRAM. However, if you squint your eyes a bit, you can see the kernel conceptually performing an eviction here, too. If there is no space in VRAM, the newly allocated memory is immediately evicted. This “eviction” is incredibly cheap to perform, since you don’t actually need to move any memory, but the result is all the same: Memory that would ideally be in VRAM ends up in GTT.
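The difference between the old and the new allocation strategy boils down to one extra branch. Here is a minimal Python sketch of that decision; it models the acceptable domains as a plain set of strings rather than the driver's real flags, and ignores everything else the kernel considers during placement.

```python
VRAM, GTT = "VRAM", "GTT"


def place_new_buffer(size, vram_free, allowed_domains):
    """Toy placement decision for a freshly allocated buffer.

    allowed_domains models what the user-mode driver accepts, e.g. {VRAM}
    (old style) or {VRAM, GTT} (current style).
    Returns (domain, must_evict_others).
    """
    if VRAM in allowed_domains and size <= vram_free:
        return VRAM, False
    if GTT in allowed_domains:
        # The cheap "eviction": the new buffer never enters VRAM at all.
        return GTT, False
    # VRAM-only request with no room: someone else's memory has to move out.
    return VRAM, True
```

With {VRAM, GTT} and a full VRAM, every new buffer takes the middle branch and lands in GTT, regardless of which application allocated it.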

This case is what we run into in Cyberpunk 2077 above. At some point, VRAM is full, and new allocations done by the game go straight to GTT. Clearly, that is the wrong decision to make here. But being more aggressive wouldn’t really work either - that was the approach before, and it was even worse. So what is the right decision here?

There is no single right decision to make here. Being aggressive is wrong, and not being aggressive is wrong, too. To be more specific, they’re wrong in different cases. It makes complete sense for a game to be aggressive, but it makes no sense for random background apps to be equally aggressive. Random background apps should not be aggressive at all, but if the game backs off equally quickly, that doesn’t help much either.

The real problem is that to the kernel driver, all memory looks the same. The kernel doesn’t know if it’s dealing with a highly-important object from a game or a static image from a random web app running in the background - all it sees is a list of buffers. As long as all buffers look the same, it is impossible for a single approach to work well in all the wildly different situations a driver may encounter.

cgroups are cool. They’re super great at organizing random batches of processes into single organizational pieces. If you make a “compile job” cgroup and put the make process in it, all compiler processes it spawns will be part of that cgroup too.

Don’t want a big build hogging up all your RAM? Set a limit with the cgroup memory controller. Want to have some CPU time for other things? Just set a CPU limit with the cgroup cpu controller. It’s great. You can have cgroup hierarchies too, and represent almost any kind of complex resource distribution you want.

Luckily, systemd agrees that cgroups are cool. Every systemd unit is actually represented with its own cgroup, as well. And, as it happens, desktop environments will represent each desktop app as a systemd unit.

How convenient! Complex resource distribution sounds exactly like the problems we’re having in GPU driver land. If only someone wrote a cgroup controller operating on memory allocations from arbitrary devices such as GPUs…


cgroups are a very clean solution for figuring out how relatively important GPU memory allocations are. Some time after Maarten Lankhorst from Intel wrote a cgroup controller managing GPU memory (initially only made for limiting how much VRAM one cgroup is allowed to consume), he pointed me to this work as a possible solution for the VRAM issues I was investigating. Eventually, this resulted in the dmem cgroup controller, written by Maarten, Maxime Ripard from Red Hat, and me.

With the dmem cgroup controller, the kernel now learns about “memory protection”. Memory being “protected” merely means that the kernel will go to significant lengths to avoid evicting that memory. For example, it may try to find memory from a different cgroup that is not protected and evict that instead. cgroups are all about resource partitioning, so for a cgroup, you can assign a “protection limit” - that is, if a cgroup’s memory usage is below that limit, its memory is protected. As soon as it exceeds the limit, the memory ceases to be protected and can more easily be evicted. This roughly corresponds to the “more aggressive” and “less aggressive” behaviors we used to have, but now we can have some applications (=cgroups) that are more aggressive and some that are less aggressive. Precisely what we wanted!
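The protection rule described above can be sketched in a few lines of Python. This is a deliberately simplified model, not the kernel's implementation: the real controller distinguishes hard and best-effort protection and computes effective protection across the whole cgroup hierarchy, while this sketch treats each cgroup independently and ignores LRU ordering. Sizes are in arbitrary units.

```python
from dataclasses import dataclass, field


@dataclass
class Cgroup:
    name: str
    protection: int                              # protected amount of VRAM
    buffers: list = field(default_factory=list)  # sizes of its VRAM buffers

    @property
    def usage(self):
        return sum(self.buffers)

    def is_protected(self):
        # At or below the protection limit, the cgroup's memory is protected.
        return self.usage <= self.protection


def pick_eviction_victim(cgroups):
    """Return (cgroup, buffer_index) to evict, preferring unprotected cgroups.

    Protected memory is only touched when no unprotected memory is left.
    Returns None if nothing is in VRAM at all.
    """
    candidates = [cg for cg in cgroups if cg.buffers]
    unprotected = [cg for cg in candidates if not cg.is_protected()]
    pool = unprotected or candidates
    if not pool:
        return None
    # Take from whichever cgroup is furthest above its protection.
    victim = max(pool, key=lambda cg: cg.usage - cg.protection)
    return victim, len(victim.buffers) - 1
```

A game cgroup using 6 units under a protection of 6 is protected, so a browser cgroup without protection gets evicted first; once the game grows past its limit, its memory becomes fair game as well.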

The dmem cgroup controller has been upstream for a while now, but for memory protection to work properly in gaming scenarios and such, you will likely still need my kernel patches.

Remember how Cyberpunk 2077 ends up with its memory in GTT because the kernel driver sees that VRAM is exhausted and puts new memory in GTT right away? I argued this is conceptually equivalent to an eviction, but under the hood, this and real evictions that move existing memory from VRAM to GTT work very differently. Among other things, protection by dmem cgroups did not apply to these “evictions” - this is what my kernel patches fix. Without them, the kernel is still not aggressive enough even if there is protection, and allocations will still end up in GTT.
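Here is a similarly simplified Python sketch of the allocation-time decision. The function and parameter names are hypothetical and this is not the code from the patches; it only contrasts "VRAM is full, go straight to GTT" with "VRAM is full, but the allocator is protected, so evict unprotected memory first".

```python
def allocate_preferring_vram(size, vram_capacity, vram_used,
                             allocator_protected, evictable_unprotected):
    """Toy allocation path for a buffer that accepts either VRAM or GTT.

    Old behaviour: a full VRAM means immediate GTT placement. Protection-aware
    behaviour: a protected allocator first tries to make room by evicting
    memory that is not protected. Returns (domain, amount_to_evict).
    """
    free = vram_capacity - vram_used
    if size <= free:
        return "VRAM", 0
    shortfall = size - free
    if allocator_protected and evictable_unprotected >= shortfall:
        # Evict just enough unprotected memory to fit the new buffer.
        return "VRAM", shortfall
    return "GTT", 0
```

The final branch still applies when there simply isn't enough unprotected memory to evict, so a game that genuinely needs more VRAM than exists will still spill into GTT.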

Maybe the best thing about cgroups for VRAM management is that the prioritization is completely dynamic and configurable by userspace. Window managers can now determine whichever app is in the foreground and give that app the highest priority via its cgroup, completely without having to teach the GPU driver what a “window” or “foreground” is. For desktops, this is an important heuristic, but it’s totally not the kernel’s business to know the concept of a foreground app.
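A foreground-change hook could look roughly like the Python sketch below. It writes directly to cgroupfs, which is not what ForegroundBooster does (it goes through systemd), and several details are assumptions: that dmem.low is the knob to use, that each write takes a "<region> <value>" line, and the region name itself, which is a placeholder to replace with one your system actually lists (for example in dmem.capacity at the cgroup root). It also assumes the dmem controller is already enabled on the parent cgroups.

```python
import os

# Placeholder region name; the PCI address and suffix are assumptions.
EXAMPLE_REGION = "drm/0000:03:00.0/vram0"


def set_dmem_low(cgroup_dir, region, value):
    """Write one '<region> <value>' line to a cgroup's dmem.low file."""
    with open(os.path.join(cgroup_dir, "dmem.low"), "w") as f:
        f.write(f"{region} {value}\n")


def on_foreground_changed(previous_dir, current_dir, region, boost_bytes):
    """Hypothetical hook: move VRAM protection to the newly focused app.

    previous_dir may be None on the first focus change.
    """
    if previous_dir is not None and previous_dir != current_dir:
        set_dmem_low(previous_dir, region, 0)   # drop the old app's protection
    set_dmem_low(current_dir, region, boost_bytes)
```

Keeping the policy ("which app is in front") entirely in userspace and only handing the kernel a number per cgroup is the split the paragraph above argues for.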

I personally use KDE Plasma as my desktop environment, so I went looking for how such a thing could be integrated into Plasma. Lo and behold, it was already done! Plasma people already developed the ForegroundBooster utility that listens to which app is currently in the foreground, and tries to give it higher prioritization (in this case: wrt. CPU time) than other apps. This prioritization was also done via cgroups, so adding VRAM prioritization in my fork was pretty much a walk in the park.

Except for one thing - the ForegroundBooster utility doesn’t manage cgroups and cgroup properties directly. systemd is responsible for managing cgroups, so ForegroundBooster just communicates with systemd to set the cgroup properties. That’s not too bad though, let’s just implement support for the dmem cgroup controller in systemd, right?

Well, this is what I thought, too. But as I alluded to before, fixing VRAM management for gaming purposes is by far not the only possible purpose of dmem cgroups. There are quite a few other use cases that people are eyeing dmem cgroups for, and if I were to implement a systemd interface while only considering the gaming scenario, the other use cases run the risk of having to deal with a systemd interface that wasn’t designed with that use case in mind at all. So for now, a common systemd implementation seems mostly off-limits until the dust has settled some more.

What do we do if we can’t tell systemd to do the thing we want? That’s right, we do it anyway, but behind systemd’s back. (Sorry, systemd.)

This is what the final piece of the puzzle, dmemcg-booster, does (safely and 🚀blazingly fast🚀). After systemd constructs the cgroup hierarchy, dmemcg-booster goes over those cgroups and additionally enables the dmem controller on them, in order to activate the kernel functionality that ultimately allows for GPU memory protection on those cgroups. While at it, it also sets some settings in the cgroup hierarchy that allow the memory protection to kick in properly.
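Enabling the dmem controller on cgroups that systemd created follows from how cgroup v2 works: a controller's interface files only appear in a cgroup once its parent lists the controller in cgroup.subtree_control. Here is a minimal Python sketch of just that step; it is not dmemcg-booster's code, it skips the additional settings the real tool applies, and writing to /sys/fs/cgroup requires root.

```python
import os


def enable_dmem_for(cgroup_root, leaf):
    """Enable the dmem controller for every ancestor of `leaf`.

    Writes "+dmem" to cgroup.subtree_control from the root down to the
    leaf's parent, so the leaf gets dmem.* interface files. The leaf itself
    is not touched. Returns the list of files written.
    """
    written = []
    current = cgroup_root
    for part in [p for p in leaf.strip("/").split("/") if p]:
        control = os.path.join(current, "cgroup.subtree_control")
        with open(control, "w") as f:
            f.write("+dmem")
        written.append(control)
        current = os.path.join(current, part)
    return written
```

On a real system this would be called with something like enable_dmem_for("/sys/fs/cgroup", "user.slice/user-1000.slice/user@1000.service/app.slice/some-app.scope"), where the path is only illustrative.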

Of course, this is a rather ugly stopgap. Once systemd gains proper support, you’d express all this with drop-in unit configurations, which is a much prettier approach. The dmemcg-booster utility is exclusively there to bridge the gap until that proper support happens.

With all the puzzle pieces finally in place, let’s repeat our test from before, launch a bunch of heavy apps, and then play Cyberpunk 2077 on top of that. How does it look now?


GTT memory usage is now down to 650MB, i.e. only the memory that the game explicitly allocated in system RAM itself. Not a single piece of memory got spilled!

Prioritization via cgroups now allows the game to use pretty much every last byte of VRAM for actual gaming purposes. It’s a bit hard to compare precise numbers on how the game performs, because the VRAM shortage slowly develops over time as you run around in the game, but the improvement should be obvious when comparing how games feel when you play them for a while. Instead of performance slowly degrading over time, games should perform much more stably - as long as the game itself doesn’t use more VRAM than you actually have. Generally, it seems like even modern games stay within a memory budget of ~8GB or a bit less, so if you have a GPU with 8GB of VRAM, you should be good to go with today’s games.

Whether or not your GPU can benefit from it depends on the kernel driver - more specifically, whether it sets up the dmem cgroup controller.

amdgpu and xe both have support for the dmem cgroup controller already. In theory, Intel GPUs running the xe kernel driver should benefit as well, although I’m not sure anyone tested this yet.

For nouveau, I have sent a patch for dmem cgroup support to the mailing lists.

This patch is also included in my development branch, so if you use my AUR package it should work. In other cases, you will need to wait for the patch to be picked up by your distribution, or apply it yourself.

The proprietary NVIDIA kernel modules do not support dmem cgroups yet, so this won’t work there.

I don’t actually know :)

The main problem (system RAM being slower than dedicated VRAM) does not exist on integrated GPUs, because they use system RAM for everything - so effects will most likely be more limited than on dGPUs. Maybe it still has some benefit? It probably requires careful testing to find out.

No, they haven’t arrived in upstream Linux yet, but I’ve sent them to the mailing lists and things are in-progress. I’ll update this when I know which mainline Linux release will pick these patches up.

I'm doing a podcast recording this week, so I wanted to run some numbers so I could have some facts rather than feels. It turns out my feels were off by a factor of 3 or so.

If asked, I've always said the contributor count to the drm subsystem is probably in the 100 or so developers per release cycle.

I did the simplest thing:

git log --format='%aN' v6.14..v6.15 drivers/gpu/drm/ include/uapi/drm/ include/drm/ | sort -u | wc -l

Then I iterated over a few kernel releases:

v6.15 326

v6.16 322

v6.17 300

v6.18 334

v6.19 332

v7.0-rc6 346
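The same count can be scripted for a range of releases. A minimal Python sketch that runs the git log query above for each consecutive pair of tags; it assumes a local kernel clone with release tags, and the helper names are made up.

```python
import subprocess

DRM_PATHS = ["drivers/gpu/drm/", "include/uapi/drm/", "include/drm/"]


def count_unique_authors(log_output):
    """Python equivalent of `sort -u | wc -l` over one author name per line."""
    return len({line for line in log_output.splitlines() if line})


def drm_authors(repo, old_tag, new_tag):
    """Run the git log query for old_tag..new_tag and count distinct authors."""
    result = subprocess.run(
        ["git", "-C", repo, "log", "--format=%aN",
         f"{old_tag}..{new_tag}", "--", *DRM_PATHS],
        capture_output=True, text=True, check=True,
    )
    return count_unique_authors(result.stdout)


def authors_per_release(repo, tags):
    """Count authors for each consecutive pair of tags, e.g. v6.14..v6.15."""
    return {new: drm_authors(repo, old, new) for old, new in zip(tags, tags[1:])}
```

For example, authors_per_release("linux", ["v6.14", "v6.15", "v6.16"]) would count drm authors for v6.15 and v6.16, given a clone in ./linux.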

The number for the complete kernel over the same release ranges is usually ~2000, which means the drm subsystem has around 15-16% of the kernel contributors.

I'm a bit spun out, that's quite a lot of people. I think I'll blame Sima for it. This also explains why I'm a bit out of touch with the process problems other maintainers have, and when I say stuff like a lot of workflows don't scale, this is what I mean.

I published three Rust crates:

name_to_handle_at and open_by_handle_at system calls

They might seem like rather arbitrary, unconnected things – but there is a connection!

systemd socket activation passes file descriptors and a bit of metadata as environment variables to the activated process. If the activated process exec’s another program, the file descriptors get passed along because they are not CLOEXEC. If that process then picks them up, things could go very wrong. So, the activated process is supposed to mark the file descriptors CLOEXEC, and unset the socket activation environment variables. If a process doesn’t do this for whatever reason however, the same problems can arise. So there is another mechanism to help prevent it: another bit of metadata contains the PID of the target. Processes can check it against their own PID to figure out if they were the target of the activation, without having to depend on all other processes doing the right thing.
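The PID check on the receiving side is small. Here is a Python sketch loosely modelled on the documented behaviour of systemd's sd_listen_fds(3): passed file descriptors start at fd 3, LISTEN_FDS says how many there are, and they only count if LISTEN_PID is our own PID. It does not implement the newer pidfd ID check, so treat it as an illustration rather than a replacement for the real library call.

```python
import os

SD_LISTEN_FDS_START = 3  # first fd passed by systemd socket activation

ACTIVATION_VARS = ("LISTEN_PID", "LISTEN_FDS", "LISTEN_FDNAMES", "LISTEN_PIDFDID")


def listen_fds(unset_environment=True):
    """Return the socket-activation fds meant for this process.

    Checks LISTEN_PID against our own PID so that a process which merely
    inherited the environment does not pick the fds up. Accepted fds are
    marked close-on-exec, and the activation variables are removed so they
    don't leak to programs we exec later. (No pidfd ID check here.)
    """
    fds = []
    try:
        if int(os.environ["LISTEN_PID"]) == os.getpid():
            count = int(os.environ["LISTEN_FDS"])
            fds = list(range(SD_LISTEN_FDS_START, SD_LISTEN_FDS_START + count))
            for fd in fds:
                os.set_inheritable(fd, False)  # i.e. set FD_CLOEXEC
    except (KeyError, ValueError):
        fds = []
    finally:
        if unset_environment:
            for name in ACTIVATION_VARS:
                os.environ.pop(name, None)
    return fds
```

Unsetting the variables and marking the descriptors close-on-exec handles the well-behaved case; the PID comparison is what protects a child process when some other program in the chain skipped those steps.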

PIDs however are racy because they wrap around pretty fast, and that’s why nowadays we have pidfds. They are file descriptors which act as a stable handle to a process and avoid the ID wrap-around issue. Socket activation with systemd nowadays also passes a pidfd ID. A pidfd ID however is not the same as a pidfd file descriptor! It is the 64-bit inode of the pidfd file descriptor on the pidfd filesystem. This has the advantage that systemd doesn’t have to install another file descriptor in the target process which might not get closed. It can just put the pidfd ID number into the $LISTEN_PIDFDID environment variable.

Getting the inode of a file descriptor doesn’t sound hard. fstat(2) fills out struct stat which has the st_ino field. The problem is that it has a type of ino_t, which is 32 bits on some systems so we might end up with a process identifier which wraps around pretty fast again.

We can however use the name_to_handle_at syscall on the pidfd to get a struct file_handle with an f_handle field. The man page helpfully says that “the caller should treat the file_handle structure as an opaque data type”. We’re going to ignore that, though, because at least on the pidfd filesystem, the first 64 bits are the 64-bit inode. With systemd already depending on this and the kernel rule of “don’t break user-space”, this is now API, no matter what the man page tells you.
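For the curious, here is roughly what that looks like from Python via ctypes, since the standard library has no name_to_handle_at wrapper. This is a sketch, not how the Rust crates are implemented: it assumes Linux, a kernel recent enough for pidfs to support file handles, and the layout described above, where the first 8 bytes of the handle are the 64-bit inode in native byte order.

```python
import ctypes
import os
import struct

AT_EMPTY_PATH = 0x1000   # from <fcntl.h>: operate on the fd itself
MAX_HANDLE_SZ = 128      # from <fcntl.h>


class FileHandle(ctypes.Structure):
    # struct file_handle, with its flexible f_handle[] sized to the maximum.
    _fields_ = [
        ("handle_bytes", ctypes.c_uint),
        ("handle_type", ctypes.c_int),
        ("f_handle", ctypes.c_ubyte * MAX_HANDLE_SZ),
    ]


def inode_from_pidfs_handle(raw):
    """Interpret the first 8 bytes of a pidfs file handle as the 64-bit inode."""
    if len(raw) < 8:
        raise ValueError("file handle too short to contain a 64-bit inode")
    return struct.unpack("=Q", raw[:8])[0]


def pidfd_id(pidfd):
    """Return the pidfd ID (the 64-bit pidfs inode) via name_to_handle_at(2)."""
    libc = ctypes.CDLL(None, use_errno=True)
    handle = FileHandle()
    handle.handle_bytes = MAX_HANDLE_SZ
    mount_id = ctypes.c_int()
    rc = libc.name_to_handle_at(pidfd, b"", ctypes.byref(handle),
                                ctypes.byref(mount_id), AT_EMPTY_PATH)
    if rc != 0:
        err = ctypes.get_errno()
        raise OSError(err, os.strerror(err))
    return inode_from_pidfs_handle(bytes(handle.f_handle[:handle.handle_bytes]))
```

Comparing pidfd_id(os.pidfd_open(os.getpid())) with the value of $LISTEN_PIDFDID would then tell a socket-activated process whether it is the intended target; os.pidfd_open needs Python 3.9 or newer.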

So there you have it. It’s all connected.

Obviously both pidfds and name_to_handle_at have more exciting uses, many of which serve my broader goal: making Varlink services a first-class citizen. More about that another time.

On March 17 we released systemd v260 into the wild.

In the weeks leading up to that release (and since then) I have posted a series of serieses of posts to Mastodon about key new features in this release, under the #systemd260 hash tag. In case you aren't using Mastodon, but would like to read up, here's a list of all 21 posts:

I intend to do a similar series of serieses of posts for the next systemd release (v261), hence if you haven't left tech Twitter for Mastodon yet, now is the opportunity.

My series for v261 will begin in a few weeks most likely, under the #systemd261 hash tag.

In case you are interested, here is the corresponding blog story for systemd v259, here for v258, here for v257, and here for v256.

More and more frequently, I get asked about my stance on AI in the context of programming. This is my attempt to summarize my stance for those who wonder.

This is a blog post that I don’t want to write, but some recent developments have more or less forced my hand here. I would have preferred to keep pretending that I’m neutral in the issue, and just hoping that the problem goes away. But that doesn’t seem to be happening.

I’m probably not the most qualified person to write about this, I’m sure you can find better informed articles out there. These are my personal opinions, and not those of my employer, their customers or any other of my affiliates. Take them with a pinch of salt, and feel free to disagree.

On a general note, I’m very reluctant to tell people how they should behave. But in this case, I’ve decided to do just that. I hope it’s clear why from the context.

Similarly, I would caution every reader to be skeptical of anyone who claims to know what the future holds, me included. People often predict the future that benefits themselves the most. There are a few places where I make predictions in this post. Those are just predictions, and I might very well be wrong.

A final warning: this is a long post, so set aside some time. I’ve tried to limit the scope somewhat to mostly cover topics concerning open source development, but I sometimes end up discussing wider issues, simply because I don’t feel like I can ignore them.

But yeah, let’s start close to home here…

Currently, the legal status of AI generated code is still far from clear. Is it derivative work of all of the training data or not? It sometimes can be, but it depends on a lot of factors.

This is a critical issue for open source; I can’t submit code somewhere when I don’t know its origin and it is potentially license-incompatible with the upstream project. This isn’t just theoretical: Copilot has been known to output Quake 3 source code with the wrong license. It doesn’t matter that the people with the most to lose from AI output counting as derivative works keep insisting that we shouldn’t worry.

The US Supreme Court recently made it clear that AI-generated code isn’t even copyrightable. A human needs to write the code for copyright to be granted. But by using AI tools, we’re blurring the line, making it hard or even impossible to tell what is written by a human and what isn’t.

I also doubt that a single lawsuit, or even a handful, is going to be enough to settle this. We’re working in a global ecosystem, and there are potentially hundreds of jurisdictions that might have to rule, and just as many subtleties to take into account before we have a good understanding of this. It’s going to take a long time to find out.

But even if this wasn’t an issue, does that really mean we should use AI? Open source software is inherently political, especially when it comes to licensing. I find “not illegal” to be a terribly low standard. IMO, it should also be the right thing to do. This brings us to the other issues…

As open source developers, it’s important for our infrastructure to be publicly available to everyone. In recent times, AI scrapers have started taking advantage of this, and are now aggressively scraping all content on open source support infrastructure so they can train their models. These scrapers often ignore robots.txt directives, and sometimes even use randomized, residential IP addresses, making it hard or impossible to effectively block them.

All of this has a major financial impact on open source projects. It’s not unusual to see over 90% of traffic provably coming from AI scrapers.

As a result, the open source community has had to introduce barriers like Anubis, which slows down initial page loads. Because Anubis is based on proof-of-work, people with slow computers can no longer reach our infrastructure. And I can’t reliably browse our GitLab instance from my phone on the bus to work.

While the latter is a minor annoyance, the former is a real problem for inclusivity.

And because our infrastructure is so heavily affected by this, it feels deeply problematic to me for us to use (and pay for) the tools that are built on this behavior. That would be rewarding the behavior. We should vote with our wallets here, and in this case that means not paying them.

Another issue is that code needs to be understood and maintained in the long term. For this to work well, we need to be able to reach out to the people that wrote the code and get input on what led to a decision. Obviously, that’s not always possible, but with AI this is almost never possible. The context is lost, and so is all the insight. Asking an AI again about the same code might lead to completely different reasoning, and miss crucial details.

The project I’m mostly working on, Mesa 3D, is also arguably critical infrastructure for a lot of computer systems around the world. We need to lean towards being conservative rather than experimental when building these kinds of systems.

Another related issue is that AI technology tends to be used to take over more “junior” tasks, but the result of this is likely to be that we end up hiring and mentoring fewer junior developers. This will lead us to having fewer competent senior developers in the future.

Interacting with an AI isn’t going to gradually make the AI learn and become more senior, unlike with a junior developer. AIs learn from training, not queries. Mentoring junior developers builds trust, which makes the interactions worthwhile for the senior as well. In my experience, interactions with AIs are little more than frustration that never improves. And because working with the AI doesn’t build any meaningful trust, the AIs will always need guard-rails to prevent disasters.

A future where we develop software with few to no human developers (junior or senior) sounds scary to me, but that’s where this path leads.

Building and running these huge data-centers is extremely resource heavy. Some of these resources are resources we all have to share on this planet, like water, electricity and rare earth minerals. This is taking a toll on our planet and everything living on it.

I feel like this point has gotten a lot less attention recently than it used to, but it hasn’t really been solved. Instead, the AI giants have just doubled down on wanting to consume all the resources they feel they need, without regard for the planet or the people living on it. They are far from truthful about the impact, and try to prevent us from knowing just how bad it is.

The truth is that AI-type solutions are almost always among the most resource-intensive solutions possible. And right now we’re being told that we should use them for all problems. This is a recipe for disaster, nothing less.

And it seems like there’s nothing being done on this front. The big AI companies are just slowly boiling the ocean, hoping that we don’t notice or that we forget. I haven’t forgotten.

A secondary effect of building all these data-centers is that demand for a bunch of resources goes up, and so does the price. This affects everyone.

We’re not just seeing electricity and water becoming more scarce, we’re also seeing memory and storage prices spiking hard. Forget about buying a new GPU, and in general expect to wait a couple of years before buying a new computer, or really any new gadgets.

How can this possibly not lead to a recession if things are allowed to continue?

And then we have the blatantly circular economy that the big AI players are running to try to convince the market this is actually profitable. In reality, very little actual money is changing hands; they’re mostly just making promises to buy tech from each other in the future… Which brings us to the big one…

Yeah, so it seems very likely we’re currently in a bubble. We’ve been in one for a while, and this bubble is going to pop. The question is when and how.

Don’t get me wrong; not every bubble that pops erases everything with it. The dot-com bubble took years to pop, and we still have computers and the internet and all that jazz.

But we’re currently overspending on infrastructure, the companies selling that infrastructure are raking it in, and they are trying hard to make us all dependent on their technology.

For the last few years, the AI industry has slurped up most of the traditional technology investment capital available. The investors seem less and less interested in investing more money into the AI industry, and want return on their investments instead. So they have started turning to things like pension funds. If they get away with this, everyone is going to pay for this, regardless of their involvement in AI.

We’ve already seen the idea of “too big to fail” being thrown out there, mirroring what happened in the subprime mortgage crisis. We should, as a society, refrain from letting them do this. These problems are caused by the AI industry, not by us consumers. We shouldn’t be the ones to bail them out when the time comes.

OpenAI’s CFO has already suggested that the U.S. government should provide a $1.4 trillion “safety net” for AI investments, and while Sam Altman has since walked that back after public outcry, this shows that these companies are already thinking along those lines.

On a more personal note, it’s kinda undeniable; I’m getting older, and part of getting old means that I need to spend more time actively thinking about things to keep up. Letting an AI take the wheel, even just for the boring bits, doesn’t help me; it only makes this worse. Keeping the brain sharp requires work, not assistance.

In fact, I often feel like I learn something useful, even when I do mundane tasks. Asking an LLM to write up a Python script for me robs me of the learning I would get from doing it myself.

Add to that the data suggesting that we actually get less productive by using AI (while thinking we’re more productive), and this all becomes very unappealing to me. My brain is my most important tool, and I’m not going to risk it because tech CEOs are yelling at the world that they need to use AI to prevent a recession.

You might have noticed that I don’t really address the technical abilities of current AI technologies in this post. The reason is that I don’t feel like I need to; it’s kinda irrelevant.

I think the moral arguments against using AI for open source development are just too large to ignore. In fact, just the licensing and environmental issues alone would probably have been enough for me to draw a hard line in the sand here:

Using AI for open source projects is in my opinion immoral, and I will not be using it. I do not condone others using AI for anything in the open source ecosystem either. Using it is simply detrimental to our values and directly harms our community.

If you’re currently playing around with AI out of curiosity for open source projects, I would like to ask you to reconsider. If you’re working in a company that’s encouraging AI usage, I would like to ask you to speak up against it. If you are involved in policy decisions for open source projects, I would like to encourage you to try your best to discourage AI adoption within those projects.

Our entire ecosystem is on the line here. Not just the open source ecosystem, but the entire, global ecosystem. And I feel there’s not enough voices speaking up about it.

Make your voice heard! Allow yourself to be angry; there’s enough nonsense going on out there! We need to stop this madness.

As I have talked about in a couple of blog posts now, I have been working a lot with AI recently as part of my day-to-day job at Red Hat, but I have also been spending a lot of evening and weekend time on it (sorry kids, pappa has switched to 1950s mode for now). One of the things I spent time on is trying to figure out what the limitations of AI models are and what kind of use they can have for Open Source developers.

One thing to mention before I start talking about some of my concrete efforts is that I have increasingly come to the conclusion that AI is an incredible tool to hypercharge someone in their work, but I feel it tends to fall short for fully autonomous systems. In my experiments, AI could do things many, many times faster than you ordinarily could; I am talking specifically about coding here, which is what is most relevant for those of us in the open source community.

One annoyance I have had for years as a Linux user is that I buy new hardware with features that are not easily available to me on Linux. So I have tried using AI to create such applications for some of my hardware, which includes an Elgato Light and a Dell UltraSharp Webcam.

Based on my use of Google Gemini, Claude Sonnet and Opus, and OpenAI Codex, I found that they all required me to direct and steer the AI continuously. If I let the AI just work on its own, more often than not it would end up going in circles, diverging from the route it was supposed to take, or taking shortcuts that made the output useless. On the other hand, if I stayed on top of the AI, intervened, and pointed it in the right direction, it could put things together for me in a very short time.

My projects are also mostly what I would describe as end leaf nodes, the kind of projects that are already one-person projects in the community for the most part. There are extra considerations when contributing to bigger efforts, and a point I have seen others in the community make too is that you need to own the patches you submit. This means that even if an AI helped you write the patch, you still need to ensure that what you submit is in a state where it can be helpful and is mergeable. I know that some people feel that means you need to be capable of reviewing the proposed patch and ensuring it’s clean and nice before submitting it, and I agree that if you expect your patch to get merged, that has to be the case. On the other hand, I don’t think AI patches are useless even if you are not able to validate them beyond ‘does it fix my issue’.

My friend and PipeWire maintainer Wim Taymans and I were talking a few years ago about what I described at the time as the problem of ‘bad quality patches’, and this was long before AI-generated code was a thing. Wim’s response, which I have often thought about since, was “a bad patch is often a great bug report”. And that holds true for AI-generated patches too. If someone uses AI to make a patch they don’t have the ability to code review themselves, but they test it and it fixes their problem, it can function as a clearer bug report than a written description from the user alone. Of course, they should be clear in their bug report that they don’t have the skills to review the patch themselves, but that they hope it can be useful as a tool for pinpointing what isn’t working in the current codebase.

Anyway, let me talk about the projects I made. They can all be found on my personal website, Linuxrising.org, which I also used AI to update after not having touched it in years.

Elgato Light GNOME Shell extension

The first project I worked on is a GNOME Shell extension for controlling my Elgato Key Wifi Lamp. The Elgato lamp is aimed at podcasters and people who do a lot of video calls, letting them easily configure the lighting in their room to make a good recording. The lamp announces itself over mDNS, and thus can be controlled via Avahi. The vendor provides software for Windows and Mac to control the lamp, but unfortunately not for Linux.